Turing Verify: A Multi-Model Forensic Engine for Scalable Document Verification

Architecture, Methodology, and Calibration of an AI-Driven Platform Defending Against 54 Attack Vectors Across 206 Countries and 23,029 Institutions — Featuring 250+ Forensic Checkpoints, Deep Verification, KYB/KYC Screening, and AI vs Human Benchmarking

Turing Space Inc. -- April 2026

Document Classification: Public Technical White Paper

Version: 2.0

Date: April 3, 2026

Abstract

Document fraud represents a persistent and escalating threat to institutions that rely on credential verification for admissions, hiring, licensing, and regulatory compliance. The proliferation of generative AI tools has significantly lowered the barrier to producing convincing forgeries, rendering traditional manual and OCR-based verification approaches increasingly inadequate. This white paper presents Turing Verify, a forensic document verification platform that employs a multi-model AI architecture to systematically evaluate documents against 250+ forensic checkpoints calibrated against 54 catalogued attack vectors. The system indexes 23,029 institutions across 206 countries — including 56 live verification portals — supports 7 document categories spanning 98 subtypes, and implements a 7-strategy QR code scanning pipeline for extracting and cross-referencing embedded verification data. (The detailed taxonomy in §4 documents the v2.0 baseline of 45 U-rules and 36 AV-defenses; v2.1 expands the checkpoint set to 250+ and the catalogued attack vectors to 54.) A tiered scoring model produces structured verdicts supported by 12-section forensic PDF reports. The platform processes documents through a multi-stage pipeline: rapid pre-screening with a lightweight triage model, QR code extraction and portal verification, standard forensic analysis via a frontier multimodal model, and an optional Deep Verification mode that expands analysis to 13 forensic stages with 8 confidence dimensions, KYB (Know Your Business) issuer due diligence, and KYC (Know Your Customer) holder background screening. Country-specific validation modules enforce national ID checksum algorithms for 6 countries and business registration format validation for 9 countries, while MRZ parsing conforms to ICAO 9303 standards across TD1, TD2, and TD3 formats. 
A credit-based pricing system supports both standard (1 credit) and deep (5 credit) verifications, with aggressive prompt caching and tiered model routing to keep marginal compute predictable. Calibration against 15 ground-truth cases, continuous feedback integration, and a 200-document AI vs Human Inspector benchmark framework ensure that detection accuracy tracks the evolving forgery landscape. This paper details the system architecture, forensic methodology, scoring model, calibration framework, benchmark system, and comparative performance characteristics of the Turing Verify platform.

1. Introduction: The Scale and Cost of Document Fraud

1.1 The Global Fraud Landscape

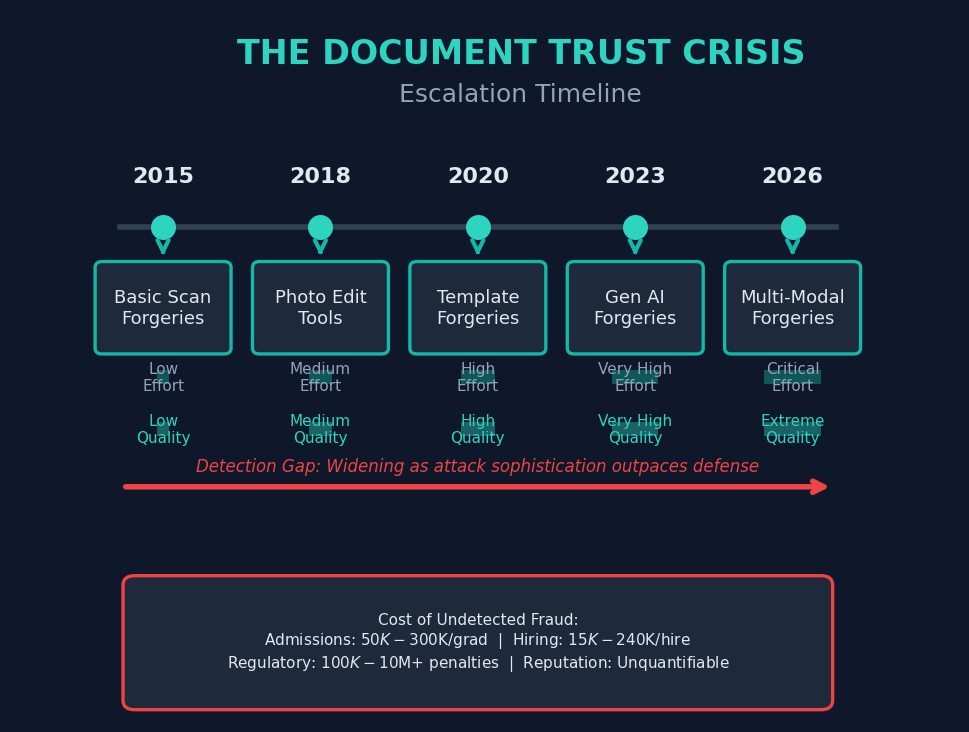

Document fraud is not a new phenomenon, but its scale, sophistication, and economic impact have accelerated dramatically in the past decade. Institutions across education, immigration, financial services, and corporate hiring depend on documentary evidence to establish identity, qualifications, and regulatory compliance. Each of these dependencies represents a potential attack surface for fraudulent actors.

Conservative estimates from international regulatory bodies place annual losses from document fraud in the tens of billions of dollars globally. The costs extend beyond direct financial losses to include reputational damage to institutions that unwittingly accept fraudulent credentials, erosion of public trust in credentialing systems, and downstream consequences when unqualified individuals occupy positions of responsibility.

The problem is compounded by the internationalization of credential flows. A single university admissions office may receive transcripts from institutions in 30 or more countries, each with distinct formatting conventions, grading systems, security features, and verification mechanisms. Corporate HR departments face similar challenges when evaluating professional certifications, government-issued IDs, and business registrations from diverse jurisdictions.

1.2 The Generative AI Accelerant

The emergence of commercially available generative AI tools has fundamentally altered the forgery landscape. Prior to 2022, producing a convincing document forgery required either specialized graphic design skills or access to expensive equipment. Template-based forgeries were detectable through layout analysis; photographic alterations left artifacts visible under magnification; and creating plausible institutional formatting demanded intimate knowledge of the target institution.

Generative AI has compressed the skill and cost requirements for each of these attack vectors. Text generation models can produce plausible institutional language, grading narratives, and administrative notations. Image generation and editing models can synthesize realistic seals, signatures, and watermarks. Multi-modal models can generate entire document images that approximate legitimate templates with troubling fidelity.

The democratization of forgery tools has produced a corresponding increase in the volume and variety of fraudulent documents entering verification pipelines. Institutions report significant increases in submissions flagged for review, while acknowledging that many sophisticated forgeries likely pass undetected through manual review processes.

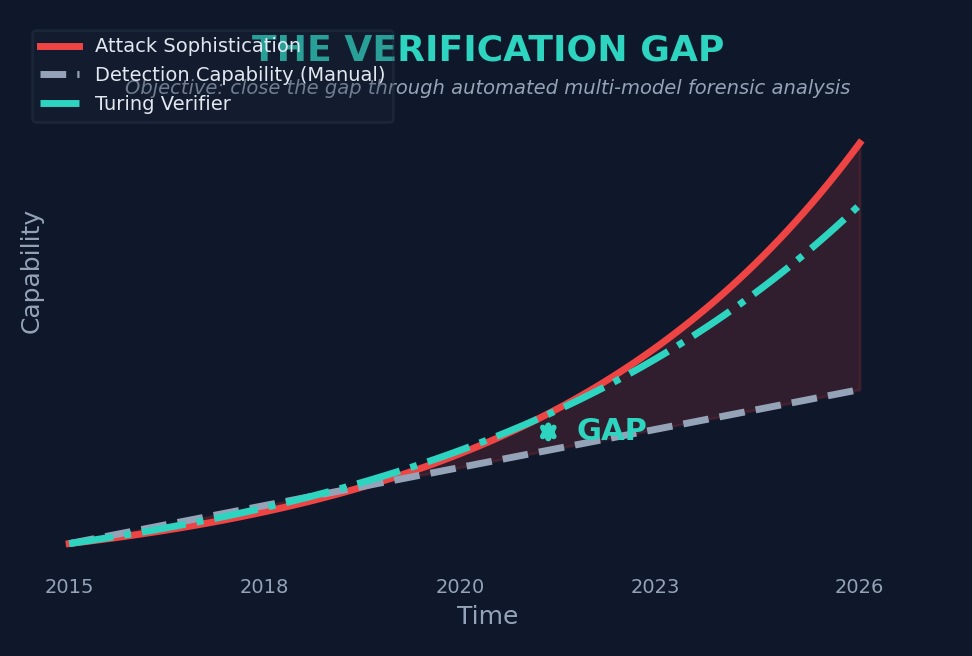

1.3 The Verification Gap

A verification gap exists between the sophistication of modern forgeries and the capabilities of prevailing verification methods. This gap manifests across several dimensions:

Volume versus throughput. Institutions processing thousands of credential submissions per cycle cannot allocate sufficient human review time to scrutinize each document at the level required to detect sophisticated forgeries.

Knowledge versus diversity. No individual reviewer possesses expertise across all document types, issuing countries, and institutional formats. A reviewer expert in North American academic transcripts may lack familiarity with the security features of Southeast Asian government IDs.

Consistency versus fatigue. Human reviewers exhibit declining accuracy over extended review sessions. Subtle anomalies that would be detected in the first hour of review may pass unnoticed in the fourth.

Speed versus depth. Organizations face pressure to process verifications quickly to meet enrollment deadlines, hiring timelines, or regulatory filing dates. This pressure incentivizes superficial review.

1.4 Design Objectives

Turing Verify was designed to address the verification gap through the following objectives:

- Comprehensive coverage: Evaluate documents against a taxonomy of forensic checkpoints that spans structural, semantic, external, and metadata dimensions.

- Attack-vector awareness: Explicitly model and defend against known forgery techniques rather than relying solely on anomaly detection.

- International scope: Support documents from multiple countries with country-specific validation logic where applicable.

- Auditability: Produce detailed forensic reports that document the reasoning behind each verdict, enabling human review and institutional decision-making.

- Scalability: Process documents at throughput rates compatible with institutional batch workflows while maintaining forensic depth.

- Adaptability: Incorporate a calibration framework that enables continuous refinement as new forgery techniques emerge.

The remainder of this paper details how these objectives are realized in the system architecture, forensic methodology, and operational design of the Turing Verify platform.

2. Background and Related Work

2.1 Evolution of Document Verification Approaches

Document verification has evolved through several generations of technology, each addressing some limitations of its predecessors while introducing new constraints.

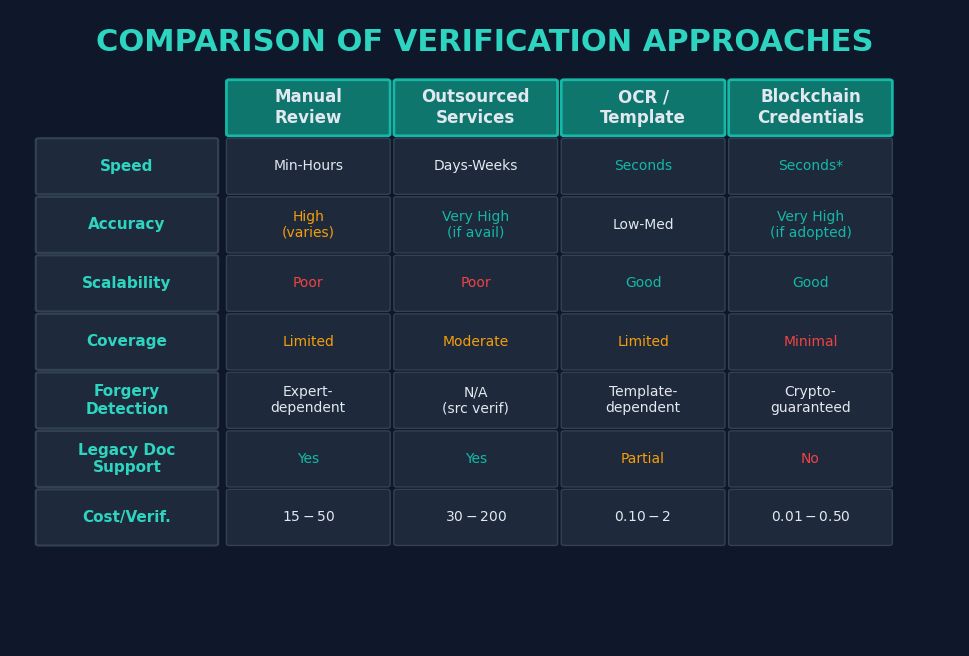

First Generation: Manual Expert Review. The earliest systematic approach to document verification relied on trained human reviewers examining documents for signs of tampering, inconsistent formatting, or implausible content. This approach offered high accuracy when reviewers possessed domain expertise relevant to the specific document type and issuing jurisdiction. However, manual review scales poorly, exhibits variability across reviewers and over time, and becomes impractical when documents originate from dozens of countries with distinct formatting conventions.

Second Generation: Outsourced Verification Services. To address scalability, many institutions outsourced verification to specialized firms that maintained databases of institutional contacts and employed staff to contact issuing organizations directly. While this approach provides high confidence when successful, it is slow (often requiring weeks), expensive per verification, and dependent on the responsiveness and existence of issuing institutions. For defunct institutions or those in jurisdictions with limited administrative infrastructure, outsourced verification frequently fails to produce definitive results.

Third Generation: OCR and Template Matching. Optical character recognition combined with template databases offered the first automated approach to verification. Systems would extract text from document images and compare structural elements against known templates. While faster than manual approaches, OCR-based systems struggle with documents that deviate from stored templates, produce high false-positive rates for legitimate but unusual formatting, and are vulnerable to forgeries that accurately replicate template structures while altering content.

Fourth Generation: Blockchain and Digital Credential Initiatives. Distributed ledger technology has been proposed as a foundational solution to credential verification by enabling issuing institutions to publish cryptographically signed credentials that recipients can share with verifiers. While architecturally sound, blockchain-based approaches require universal adoption by issuing institutions, a prerequisite that remains far from realized. The vast majority of credentials in circulation were issued on paper or as conventional digital documents without blockchain anchoring, leaving an enormous legacy verification problem that blockchain solutions do not address.

2.2 The Multi-Model AI Approach

Turing Verify represents a fifth-generation approach that combines the strengths of prior methods while mitigating their limitations. By employing multiple large language models with vision capabilities, the system can analyze document images with a sophistication that approximates expert human review while maintaining the speed and consistency of automated systems. Integration with external verification portals provides the source-verification capability of outsourced services without the latency and cost overhead.

The use of multiple AI models serves several purposes beyond redundancy. Different models exhibit complementary strengths: some excel at rapid pattern recognition suitable for pre-screening, while others demonstrate superior reasoning capabilities for complex forensic analysis. By routing documents through a tiered pipeline that matches model capabilities to task complexity, the system optimizes both accuracy and cost efficiency.

2.3 Positioning Within the Verification Ecosystem

Turing Verify is not intended to replace all forms of verification. For documents anchored to blockchain credential systems, cryptographic verification remains the gold standard. For cases requiring legal-grade authentication, human expert review with physical document examination remains necessary. Turing Verify addresses the large and growing middle ground: high-volume verification workflows where documents arrive as digital images, originate from diverse jurisdictions, and require systematic forensic analysis at speeds compatible with institutional timelines.

3. System Architecture

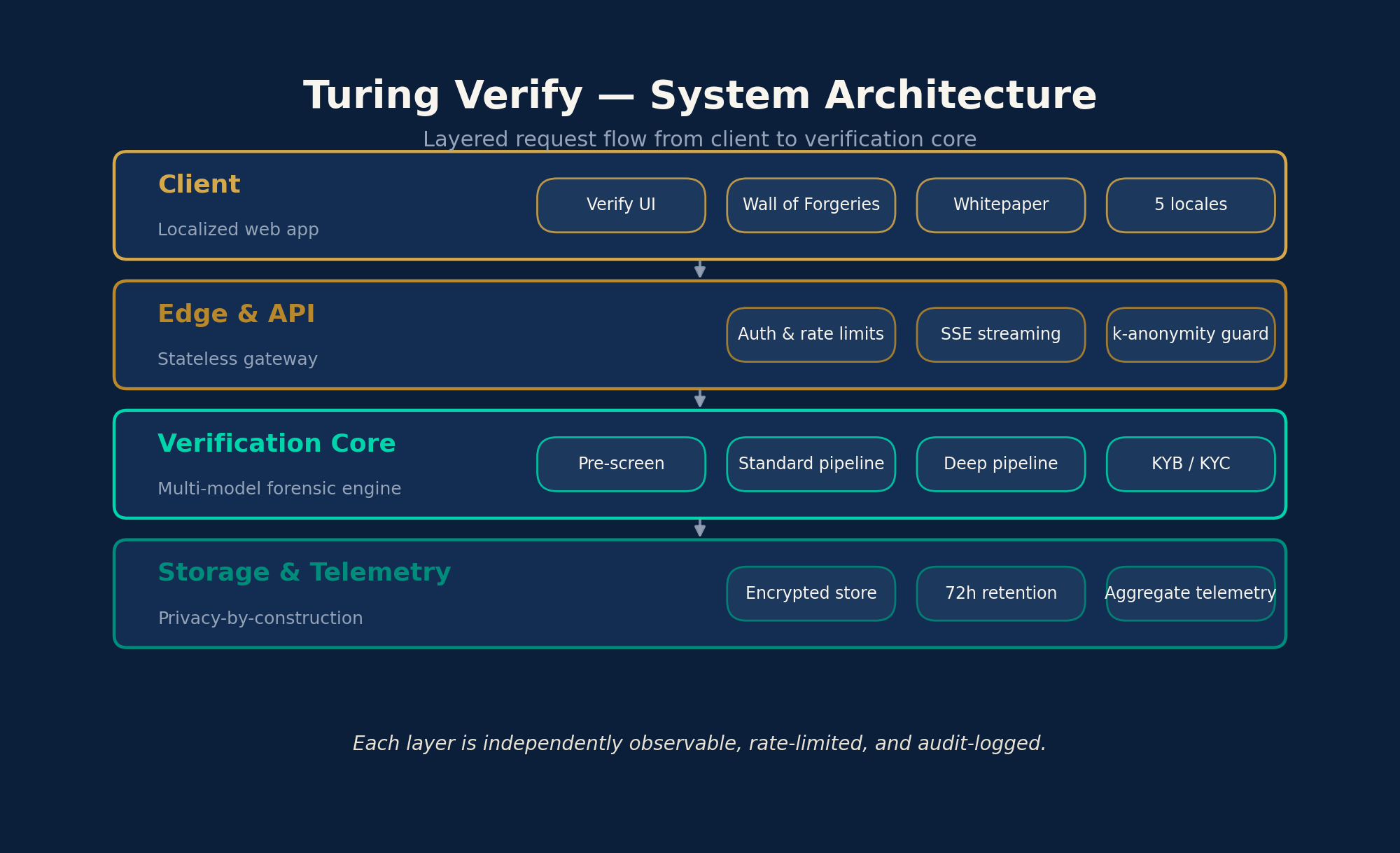

3.1 Architectural Overview

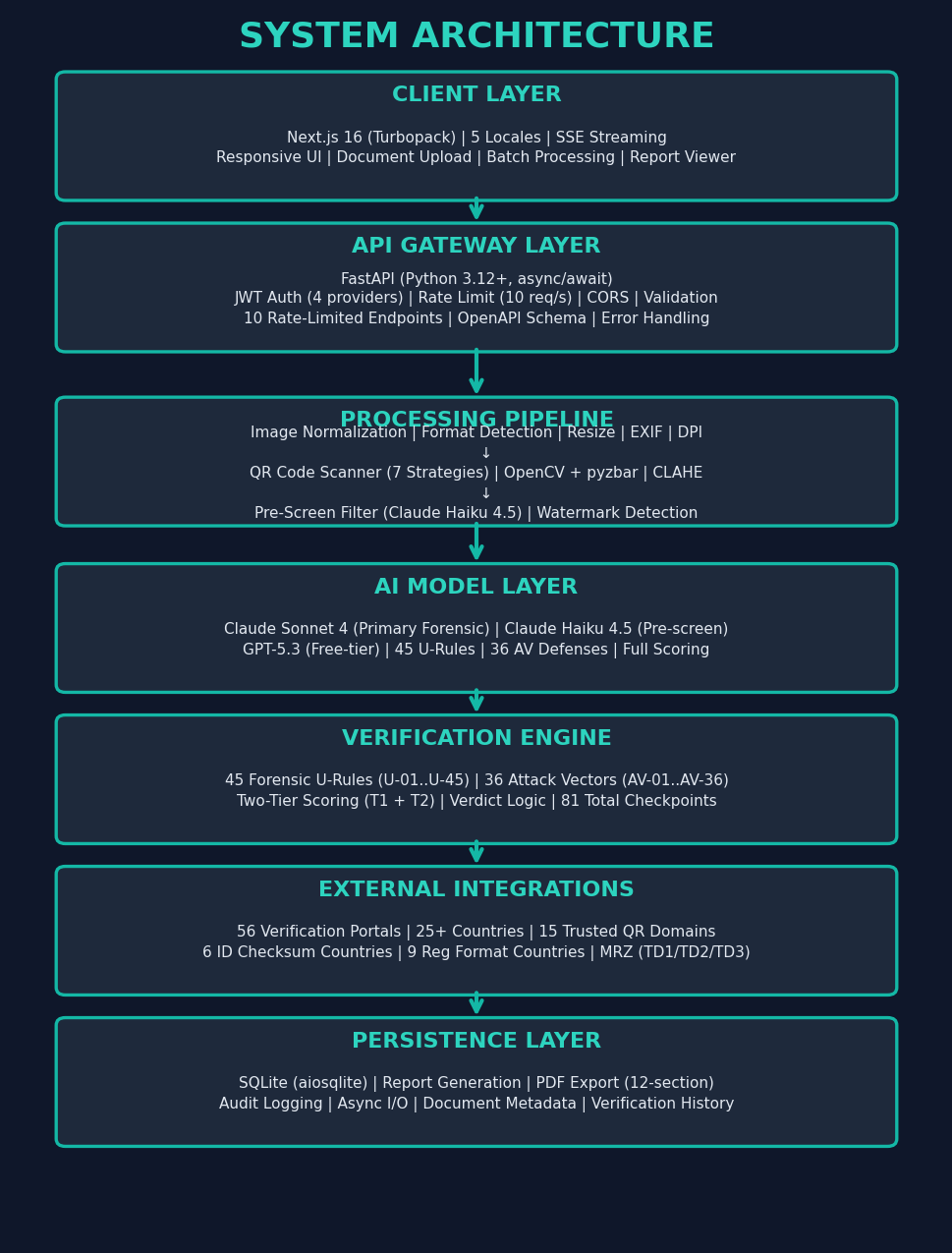

The Turing Verify platform follows a layered architecture that separates concerns across client presentation, API management, processing orchestration, AI inference, external integration, and data persistence. Each layer communicates through well-defined interfaces, enabling independent scaling and evolution.

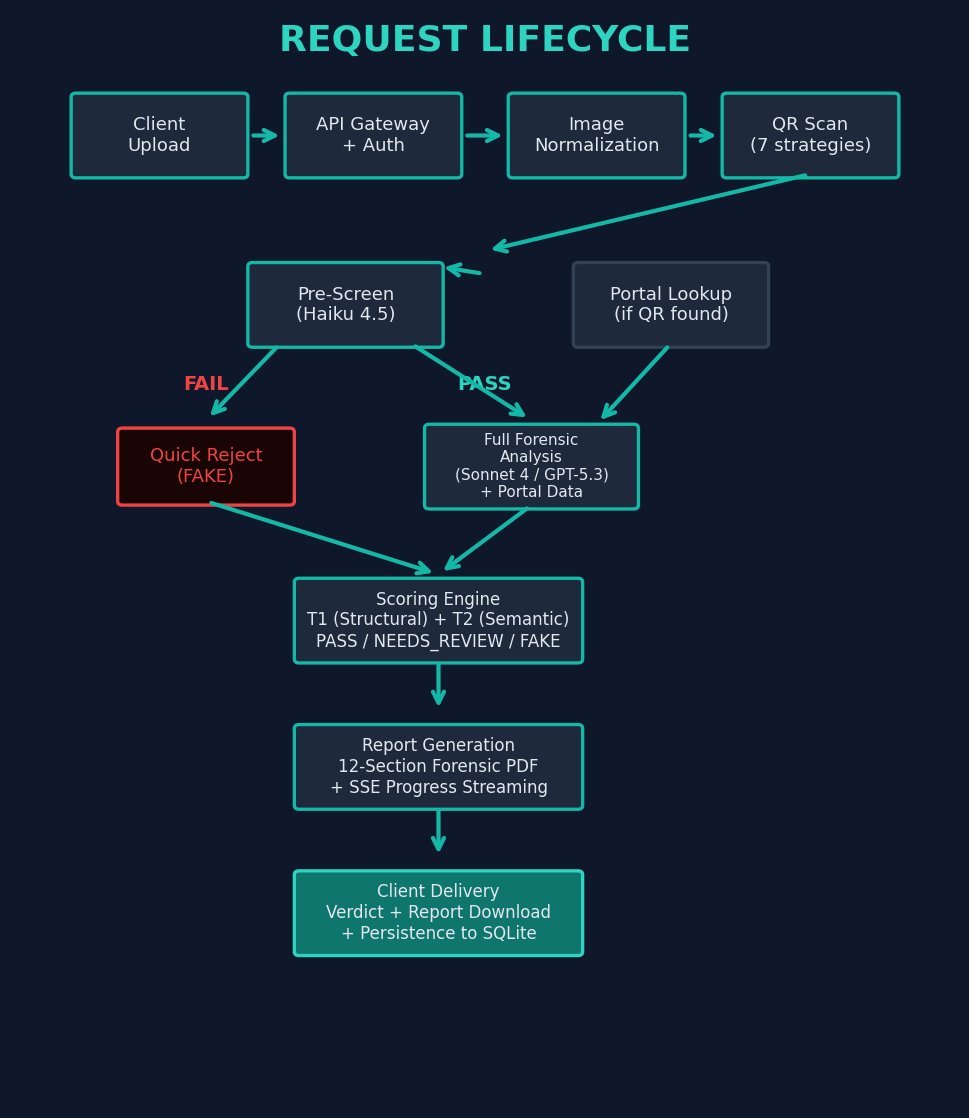

3.2 Request Lifecycle

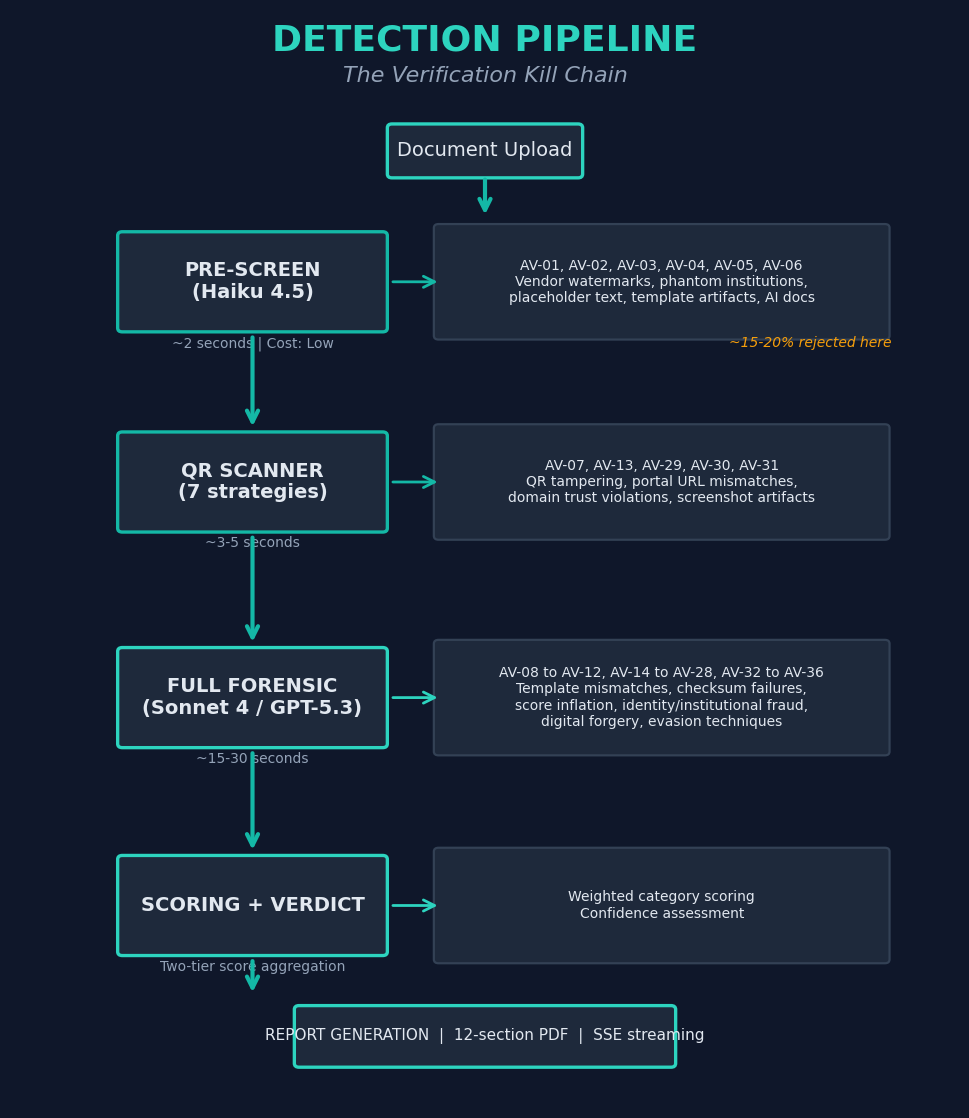

A document verification request traverses the following stages from submission to verdict delivery:

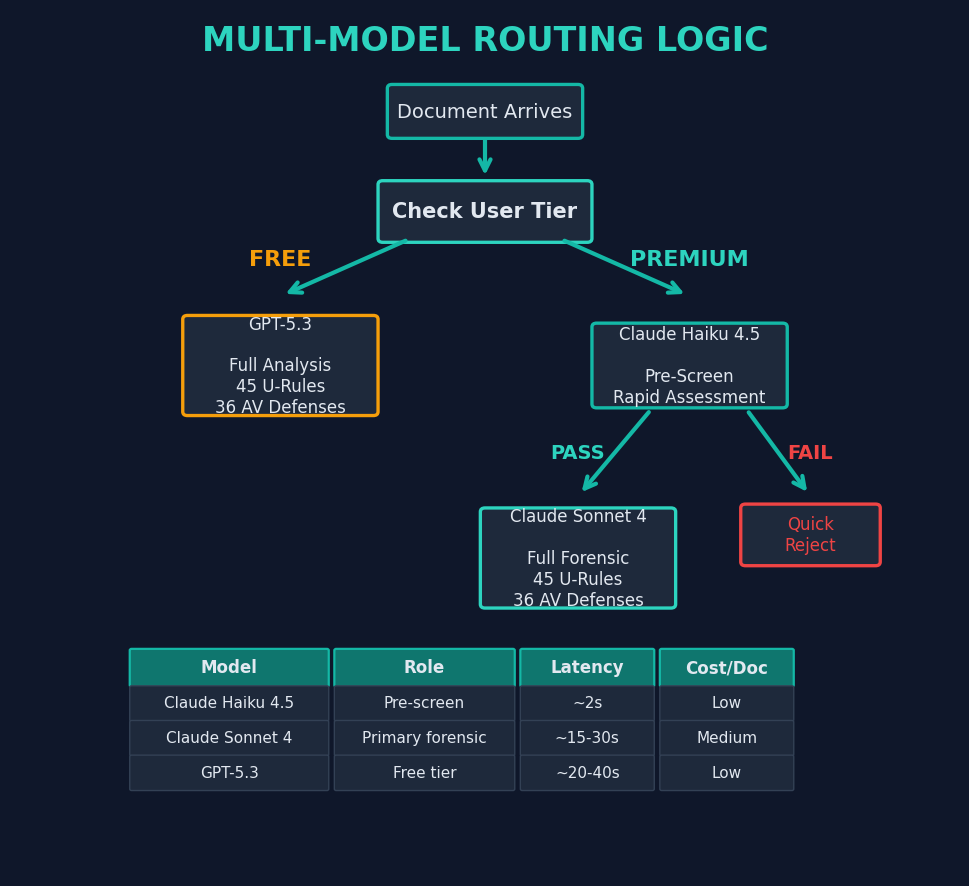

3.3 Multi-Model Routing

The platform employs four AI models, each selected for specific characteristics that match different stages and tiers of the verification pipeline:

The lightweight triage model is the universal pre-screening stage, applied to all verifications regardless of tier. Its low latency makes it suitable for rapid assessment of obvious indicators: vendor watermarks from known forgery mills, placeholder text, phantom institution names, and gross formatting anomalies. Documents flagged by the pre-screener receive an immediate rejection verdict without consuming the resources of a full forensic analysis, which materially reduces downstream load.

The primary forensic model is the analysis engine for standard verifications. Its advanced reasoning capabilities enable nuanced evaluation of layout consistency, semantic plausibility, cross-referencing against portal data, and detection of sophisticated attack vectors that require contextual understanding. Standard verification runs 8 analysis stages.

The free-tier forensic model provides full forensic analysis for free-tier users, applying the same 45 U-rules and 36 AV-defenses across 8 analysis stages.

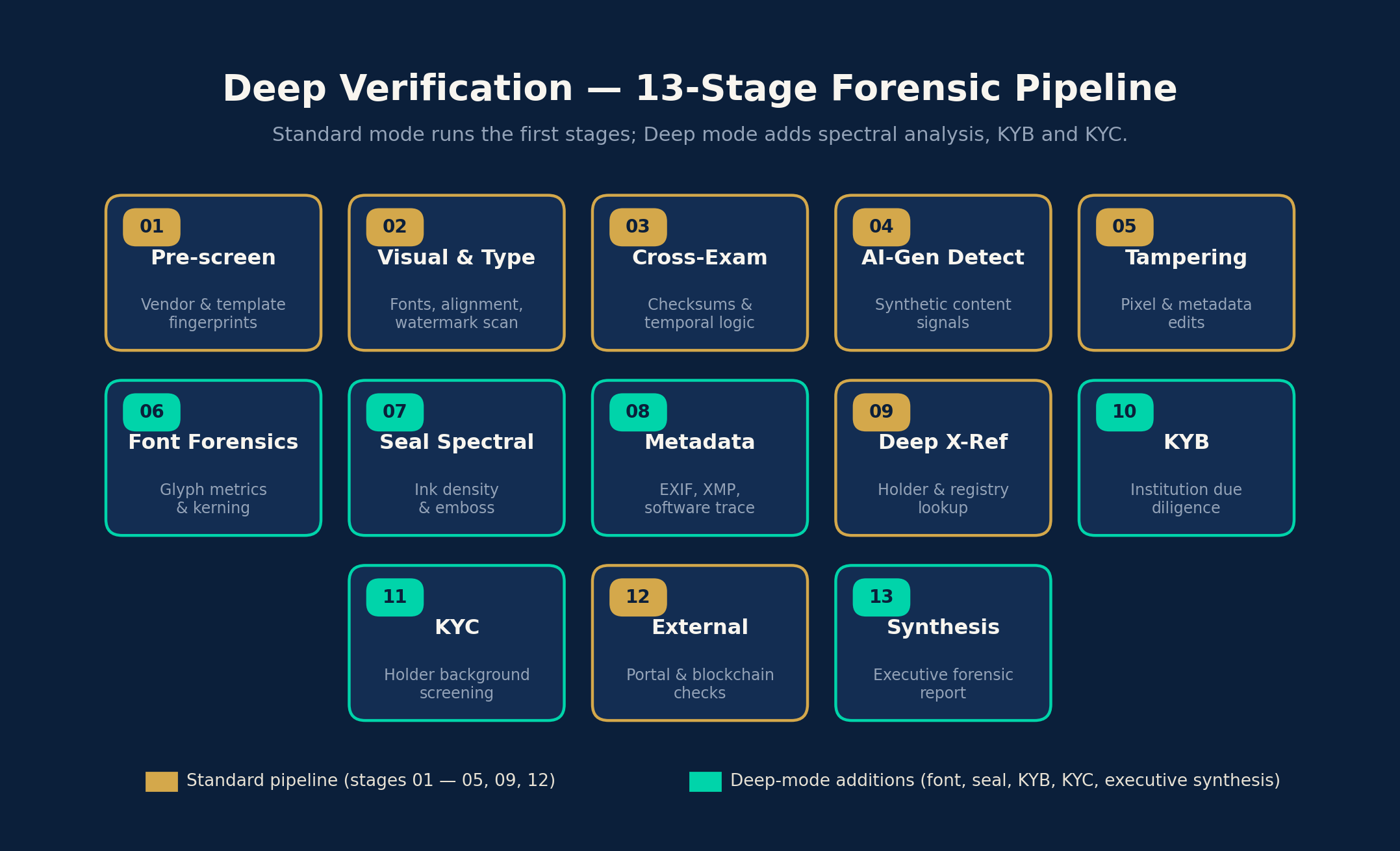

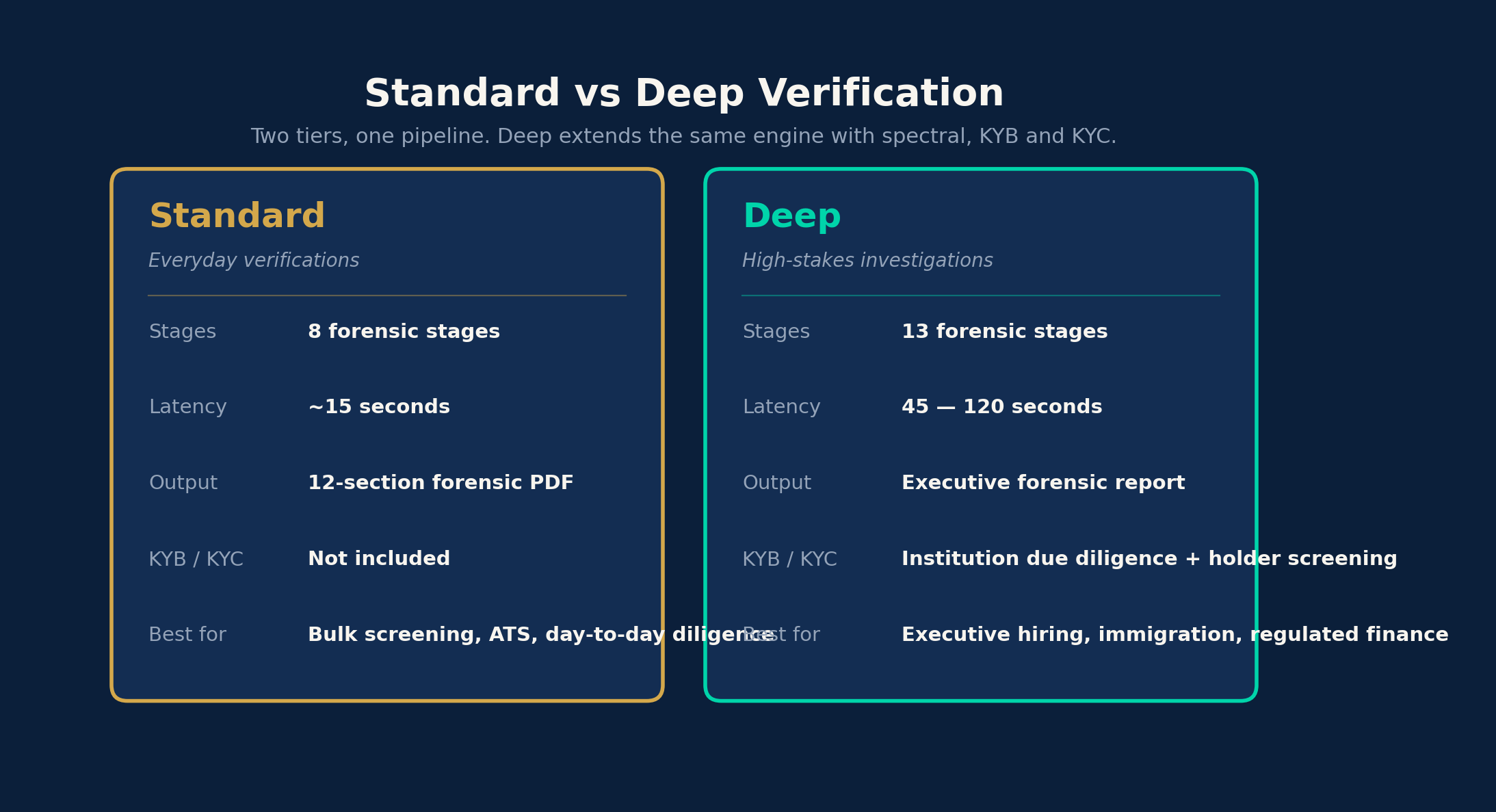

The deep verification engine powers the Deep Verification mode, a premium forensic investigation tier introduced in v2.0. It processes documents through 13 analysis stages (compared to 8 for standard), with a maximum of 16,000 output tokens for comprehensive forensic reports. Deep Verification includes specialized stages for font forensics, seal and watermark spectral analysis, institutional deep cross-referencing, KYB issuer due diligence, and KYC holder background screening. Each deep verification consumes 5 credits.
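The tiered routing described above can be sketched roughly as follows. This is a minimal illustration, not the platform's actual router: the model labels, the `Route` type, and `plan_pipeline` are hypothetical names, and only the stage counts, credit costs, and the 16,000-token cap are taken from the text.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Route:
    model: str                        # placeholder label, not a real model name
    stages: int                       # analysis stages stated in the text
    credits: Optional[int]            # None where the text gives no figure
    max_output_tokens: Optional[int]  # None where the text gives no figure


# Hypothetical routing table keyed by verification tier.
ROUTES = {
    "free": Route("free-tier-forensic", 8, None, None),
    "standard": Route("primary-forensic", 8, 1, None),
    "deep": Route("deep-verification", 13, 5, 16_000),
}


def plan_pipeline(tier: str, prescreen_flagged: bool) -> List[str]:
    """Return the ordered model labels a document would pass through."""
    steps = ["lightweight-triage"]  # pre-screening runs for every tier
    if prescreen_flagged:
        # Flagged documents are rejected immediately; no forensic model runs.
        return steps
    steps.append(ROUTES[tier].model)
    return steps
```

Keeping the routing table as data rather than branching logic makes it straightforward to add a tier or change a stage count without touching the control flow.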

3.4 Technology Stack

The client layer is built on Next.js 16 with Turbopack for optimized build performance, supporting five locales (English, Traditional Chinese, Japanese, French, and Spanish). Server-Sent Events provide real-time progress streaming during document analysis, enabling users to observe the verification pipeline as it executes.

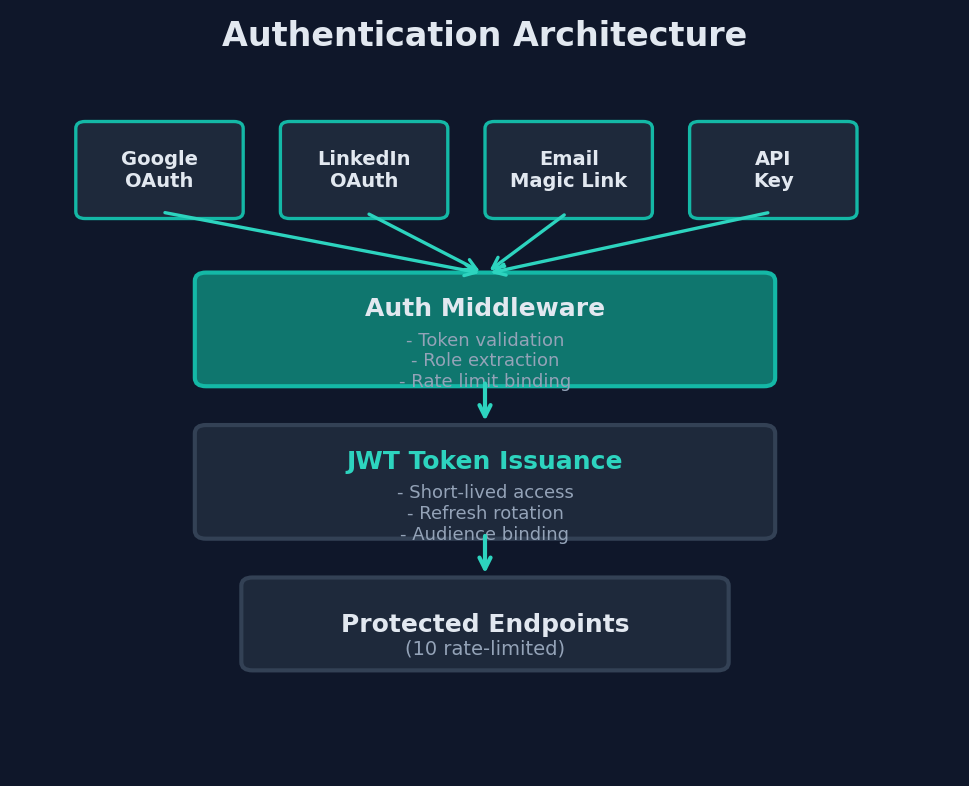

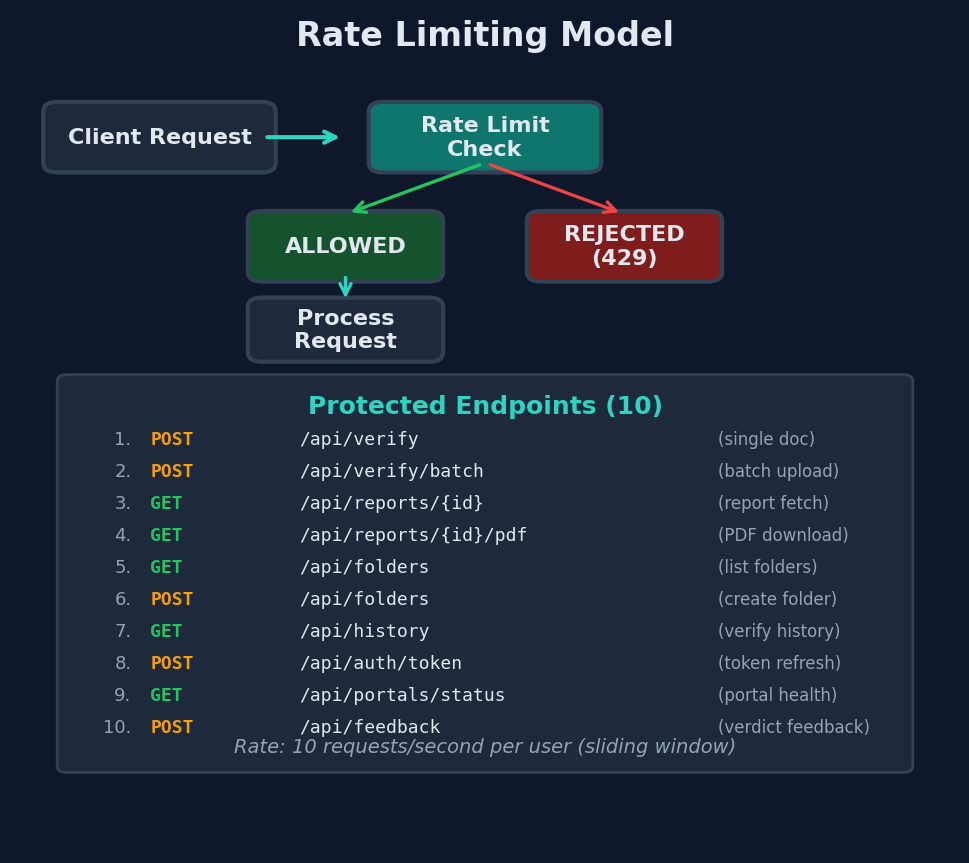

The API layer runs on FastAPI with full async/await support via Python 3.12+. Authentication supports four providers, and rate limiting enforces a maximum of 10 requests per second across 10 rate-limited endpoints. Request validation ensures that only properly formatted document images enter the processing pipeline.
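As an illustration of the 10 requests-per-second limit, a sliding-window limiter could look like the sketch below. The text does not say whether the limit is applied per client, per endpoint, or globally, nor which algorithm is used; the per-key design, the class name, and the injectable clock are all assumptions.

```python
import time
from collections import deque
from typing import Callable, Deque, Dict


class SlidingWindowLimiter:
    """Allow at most `max_requests` per `window_seconds` for each key."""

    def __init__(
        self,
        max_requests: int = 10,
        window_seconds: float = 1.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._max = max_requests
        self._window = window_seconds
        self._clock = clock
        self._hits: Dict[str, Deque[float]] = {}  # key -> recent timestamps

    def allow(self, key: str) -> bool:
        now = self._clock()
        hits = self._hits.setdefault(key, deque())
        # Drop timestamps that have aged out of the window.
        while hits and now - hits[0] >= self._window:
            hits.popleft()
        if len(hits) >= self._max:
            return False
        hits.append(now)
        return True
```

In a FastAPI application a check like `limiter.allow(client_id)` would typically sit in a dependency or middleware and translate a `False` result into an HTTP 429 response.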

The persistence layer uses SQLite accessed through aiosqlite for non-blocking database operations. This choice optimizes for deployment simplicity and single-node performance while maintaining ACID transaction guarantees for verification records.

4. Forensic Methodology

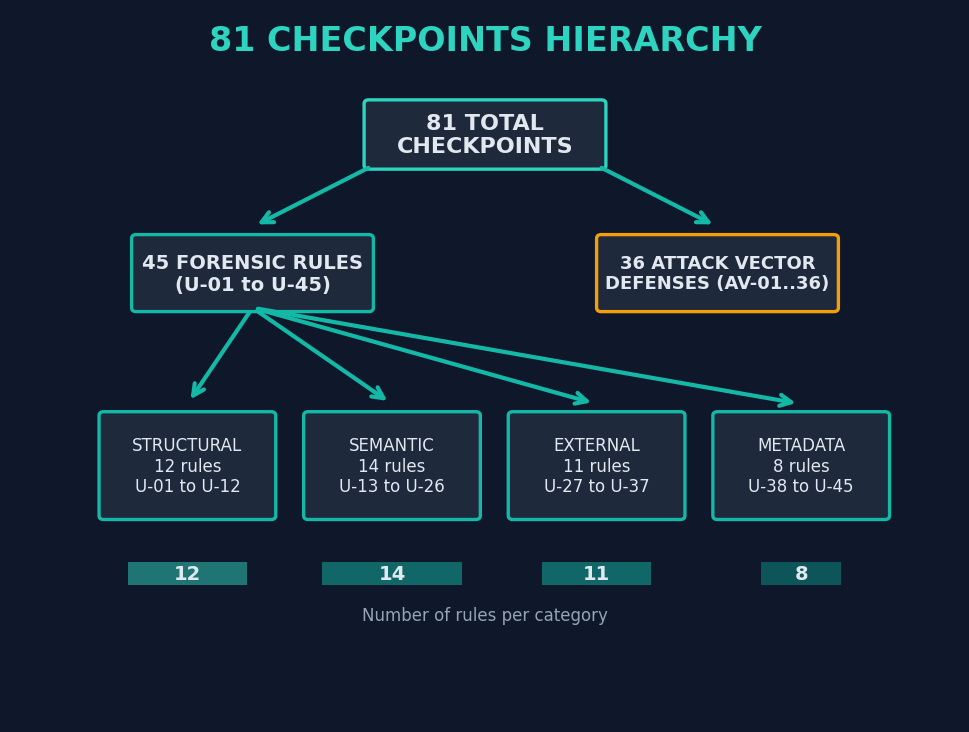

This section constitutes the technical core of the Turing Verify platform, detailing the checkpoint taxonomy, attack vector defense framework, scoring model, and calibration methodology.

4.1 Checkpoint Taxonomy

The 45 forensic rules (designated U-01 through U-45) are organized into four primary categories based on the dimension of document authenticity they evaluate.

4.1.1 Structural Rules (U-01 to U-12)

Structural rules evaluate the physical layout, formatting, and visual composition of the document. These rules detect anomalies in the tangible properties of the document image.

| Rule ID | Rule Name | Description |
|---|---|---|
| U-01 | Template Conformance | Evaluates whether the document layout matches known templates for the claimed issuing institution |
| U-02 | Font Consistency | Checks for unauthorized font changes, mixed font families, or anachronistic typefaces |
| U-03 | Alignment Integrity | Verifies that text blocks, borders, and graphical elements maintain consistent alignment |
| U-04 | Seal/Stamp Authenticity | Assesses institutional seals, stamps, and embossing for consistency with known specimens |
| U-05 | Signature Presence | Confirms the presence and plausibility of required signatures |
| U-06 | Paper/Background Uniformity | Evaluates background texture, color consistency, and absence of splicing artifacts |
| U-07 | Print Quality Assessment | Detects anomalies in print resolution, dot patterns, and toner distribution |
| U-08 | Border and Frame Integrity | Verifies decorative borders, frames, and security guilloche patterns |
| U-09 | Logo Fidelity | Compares institutional logos against known versions for proportional and chromatic accuracy |
| U-10 | Watermark Analysis | Evaluates watermark presence, positioning, and transparency characteristics |
| U-11 | Hologram/Security Feature Indicators | Assesses visual indicators of holographic or security printing features |
| U-12 | Image Resolution Consistency | Detects regions of inconsistent resolution that may indicate compositing |

4.1.2 Semantic Rules (U-13 to U-26)

Semantic rules evaluate the content, meaning, and logical coherence of information presented in the document.

| Rule ID | Rule Name | Description |
|---|---|---|
| U-13 | Date Plausibility | Verifies that all dates are logically consistent and chronologically valid |
| U-14 | Grade/Score Validity | Checks that grades, scores, and GPAs fall within valid ranges for the claimed institution |
| U-15 | Course Load Plausibility | Evaluates whether the number and distribution of courses is realistic |
| U-16 | Institutional Language | Assesses whether administrative language matches the claimed institution's conventions |
| U-17 | Credential Designation | Verifies that degree names, certificate titles, and credential designations are valid |
| U-18 | Name Consistency | Checks that the subject's name is consistent across all instances within the document |
| U-19 | Address/Location Validity | Verifies that addresses, cities, and jurisdictions are geographically plausible |
| U-20 | Registration/ID Number Format | Validates that registration and student ID numbers conform to known formats |
| U-21 | Grading System Consistency | Ensures the grading scale used is consistent throughout and matches institutional norms |
| U-22 | Credit Hour Validation | Verifies credit hours or units against institutional standards |
| U-23 | Cumulative Calculation Accuracy | Recalculates GPAs, weighted averages, and totals for mathematical accuracy |
| U-24 | Signatory Title Plausibility | Checks that signatory titles match plausible administrative positions |
| U-25 | Language and Grammar | Evaluates text for grammatical anomalies inconsistent with institutional quality |
| U-26 | Content Completeness | Assesses whether all expected sections and fields are present for the document type |
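Rule U-23 (Cumulative Calculation Accuracy) lends itself to a small worked example. The sketch below recomputes a credit-weighted GPA and compares it with the figure printed on the transcript. The function names, the 0.01 tolerance, and the assumption that grades are already expressed as grade points are illustrative choices, not details taken from the platform.

```python
from typing import Iterable, Tuple

Course = Tuple[float, float]  # (grade_points, credit_hours)


def recompute_gpa(courses: Iterable[Course]) -> float:
    """Credit-weighted mean of grade points."""
    courses = list(courses)
    total_credits = sum(credits for _, credits in courses)
    if total_credits <= 0:
        raise ValueError("transcript lists no credit hours")
    weighted = sum(points * credits for points, credits in courses)
    return weighted / total_credits


def gpa_matches(courses: Iterable[Course], stated_gpa: float,
                tolerance: float = 0.01) -> bool:
    """True when the stated GPA agrees with the recomputed value.

    The tolerance absorbs rounding on the printed transcript; 0.01 is an
    assumed value, not a documented threshold.
    """
    return abs(recompute_gpa(courses) - stated_gpa) <= tolerance
```

For three courses worth (4.0, 3), (3.0, 4), and (3.7, 3), the weighted sum is 35.1 over 10 credits, so a transcript stating 3.51 passes while one stating 3.90 would be flagged.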

4.1.3 External Verification Rules (U-27 to U-37)

External rules leverage data obtained from sources outside the document itself, including QR codes, verification portals, and public databases.

| Rule ID | Rule Name | Description |

|---|---|---|

| U-27 | QR Code Presence | Determines whether the document contains an embedded QR code |

| U-28 | QR Data Extraction | Evaluates whether QR code data can be successfully decoded |

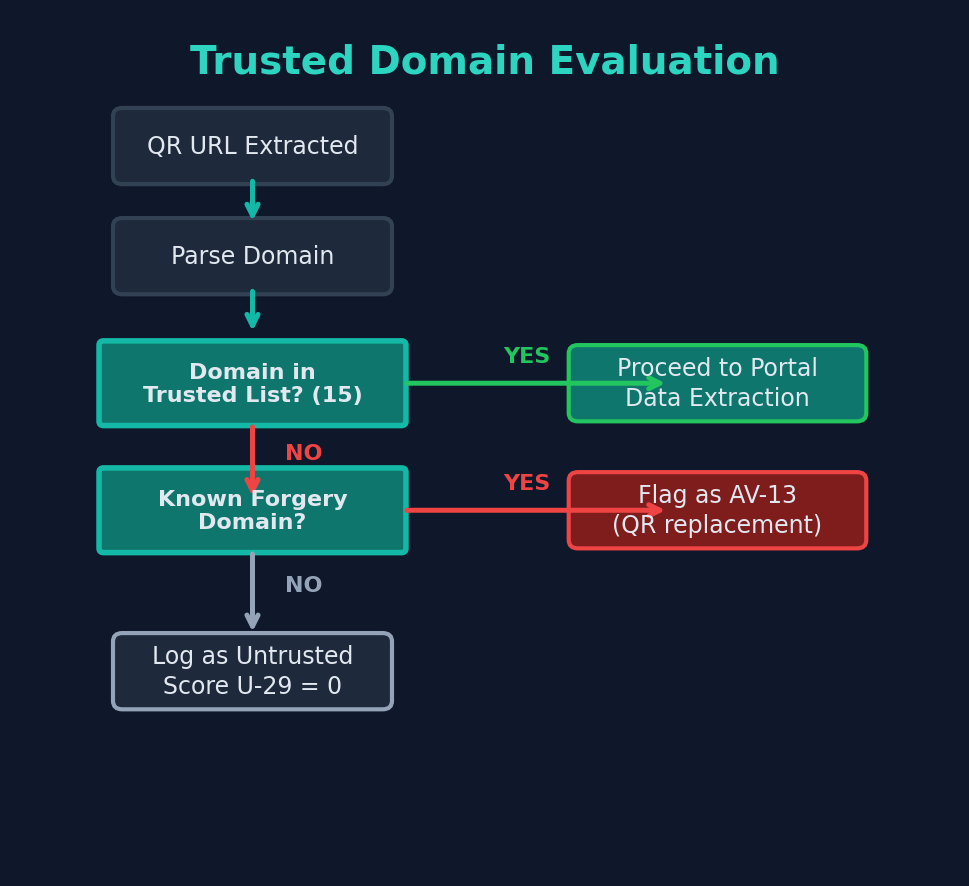

| U-29 | QR Domain Trust | Verifies that QR code URLs point to trusted verification domains |

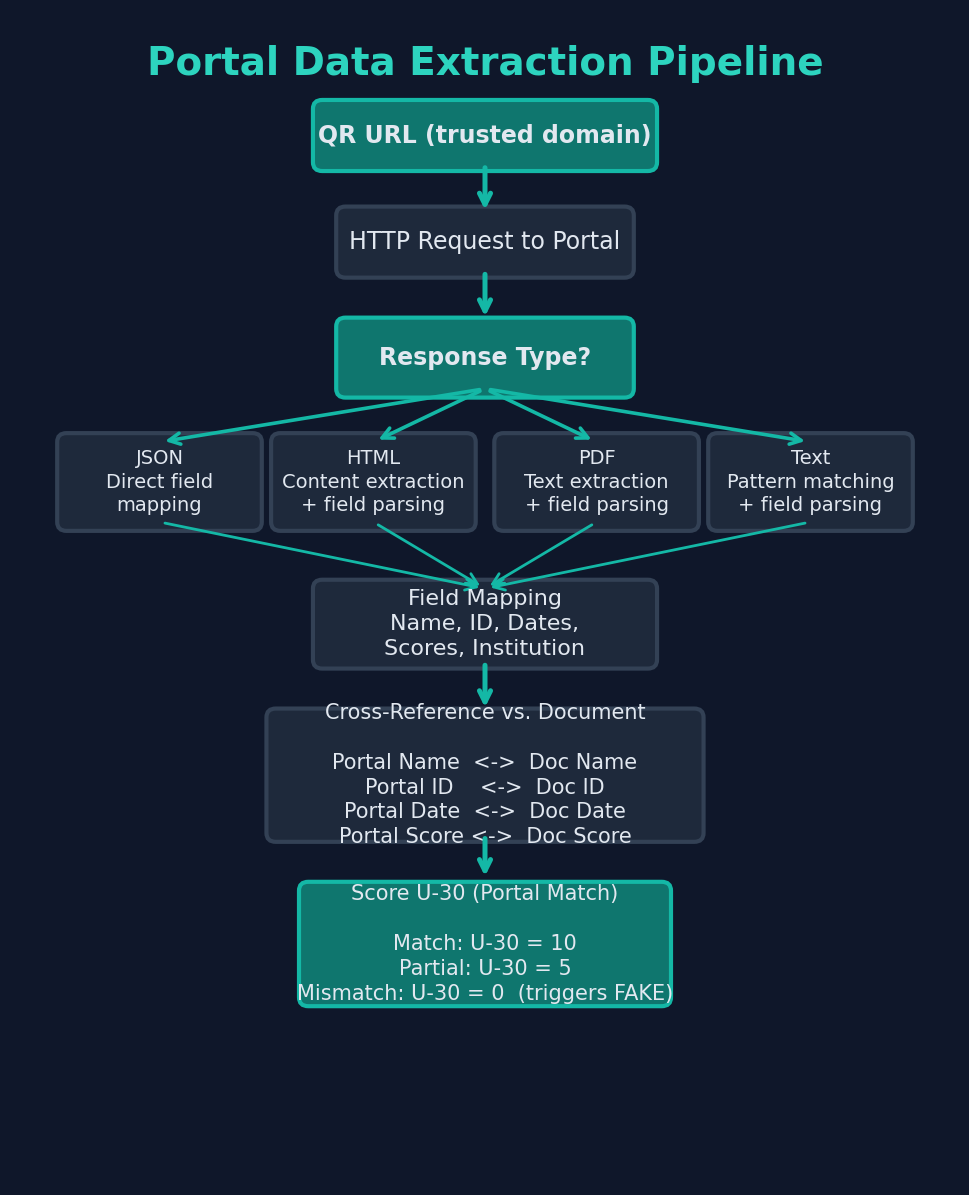

| U-30 | Portal Data Match | Cross-references document content against data retrieved from verification portals |

| U-31 | Portal Existence Verification | Confirms that the claimed verification portal exists and is operational |

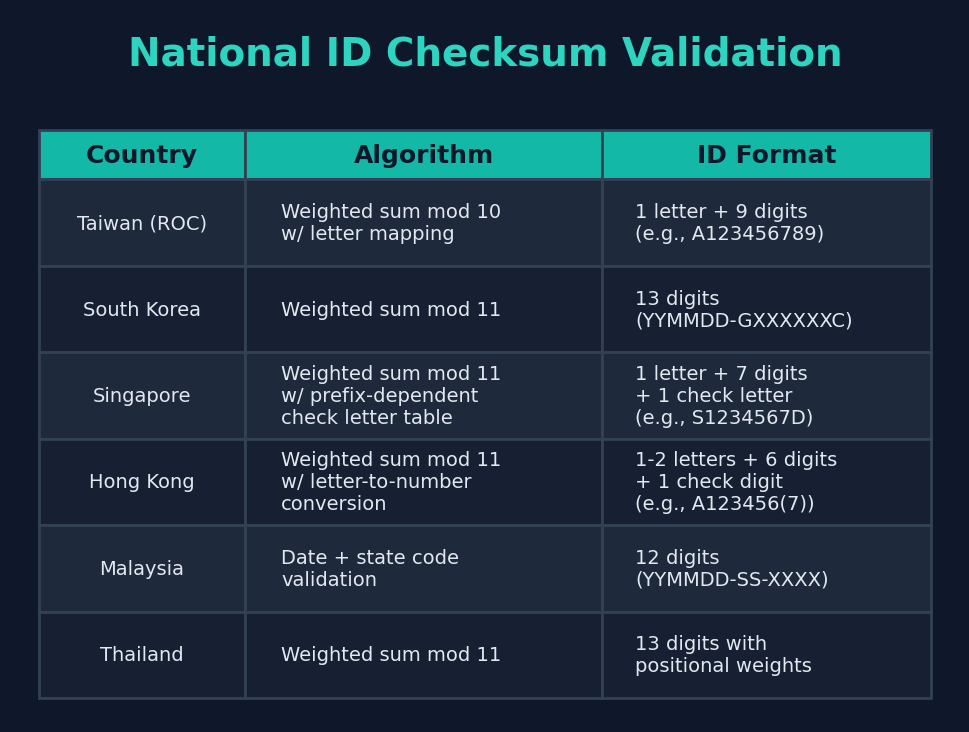

| U-32 | National ID Checksum | Validates national ID numbers against country-specific checksum algorithms |

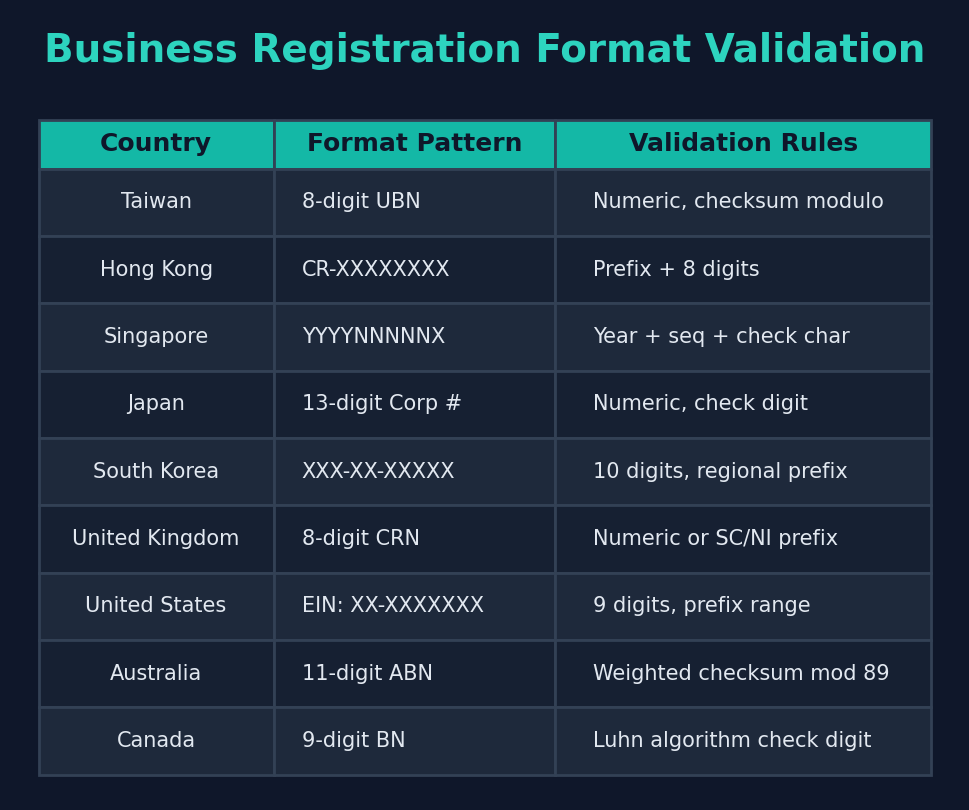

| U-33 | Business Registration Format | Verifies business registration numbers against country-specific format rules |

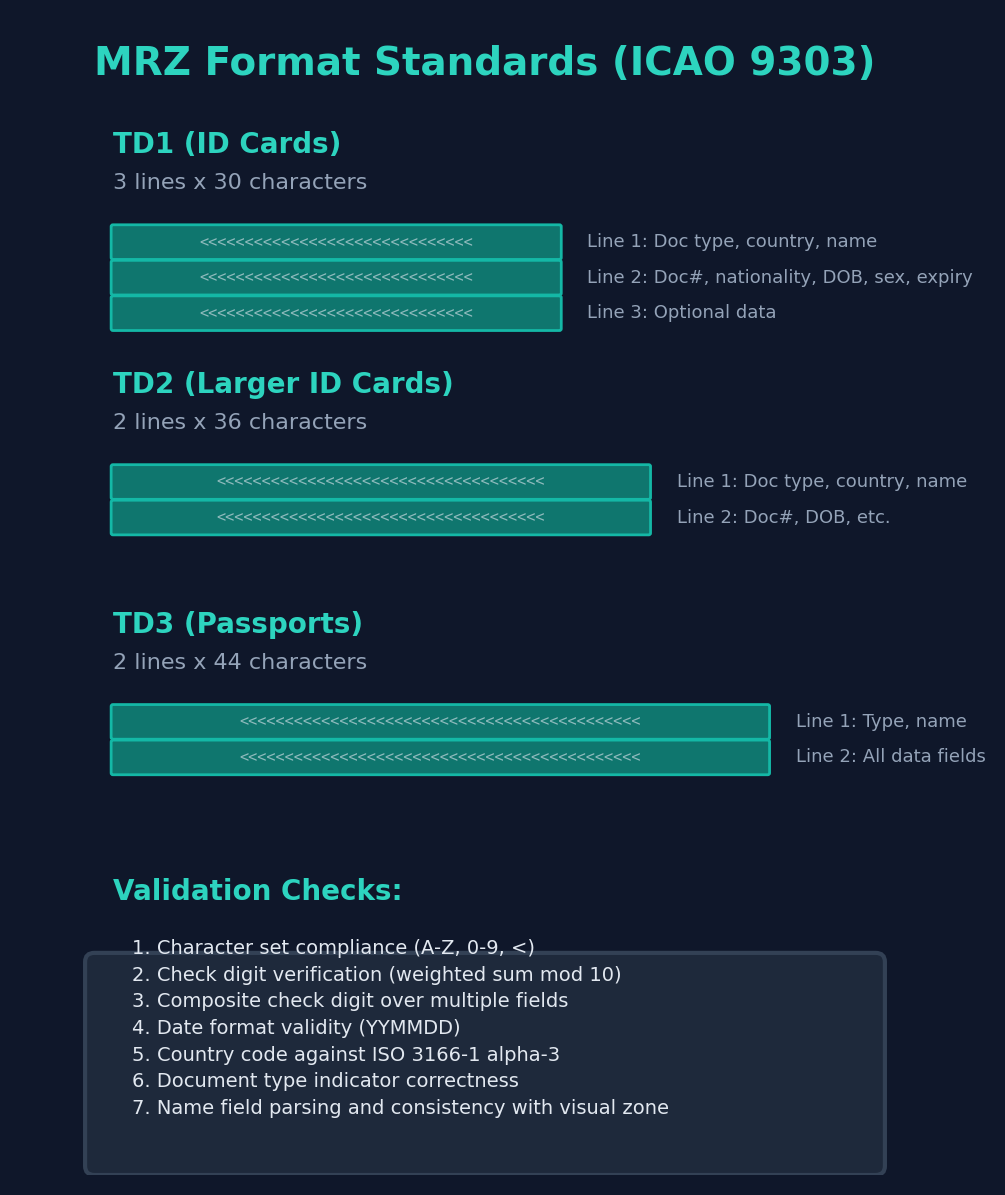

| U-34 | MRZ Validation | Parses and validates Machine Readable Zone data per ICAO 9303 standards |

| U-35 | Institution Existence | Confirms that the claimed issuing institution exists in reference databases |

| U-36 | Accreditation Status | Verifies the accreditation status of educational institutions where applicable |

| U-37 | Document Number Cross-Reference | Cross-references document serial numbers against portal records |

4.1.4 Metadata Rules (U-38 to U-45)

Metadata rules evaluate properties of the document image file itself, detecting traces of digital manipulation.

| Rule ID | Rule Name | Description |
|---|---|---|
| U-38 | EXIF Data Analysis | Examines image metadata for creation tools, timestamps, and device information |
| U-39 | Compression Artifact Analysis | Detects inconsistent JPEG compression levels indicating compositing |
| U-40 | Color Space Consistency | Evaluates color profiles and gamut for uniformity across the document |
| U-41 | Resolution Metadata Match | Compares declared resolution against actual pixel density |
| U-42 | Creation Tool Detection | Identifies software signatures in metadata (e.g., Photoshop, GIMP) |
| U-43 | Modification History | Examines metadata for evidence of multiple editing sessions |
| U-44 | Embedded Object Analysis | Detects hidden layers, embedded objects, or XMP data anomalies |
| U-45 | Digital Signature Verification | Validates cryptographic signatures if present in the document |

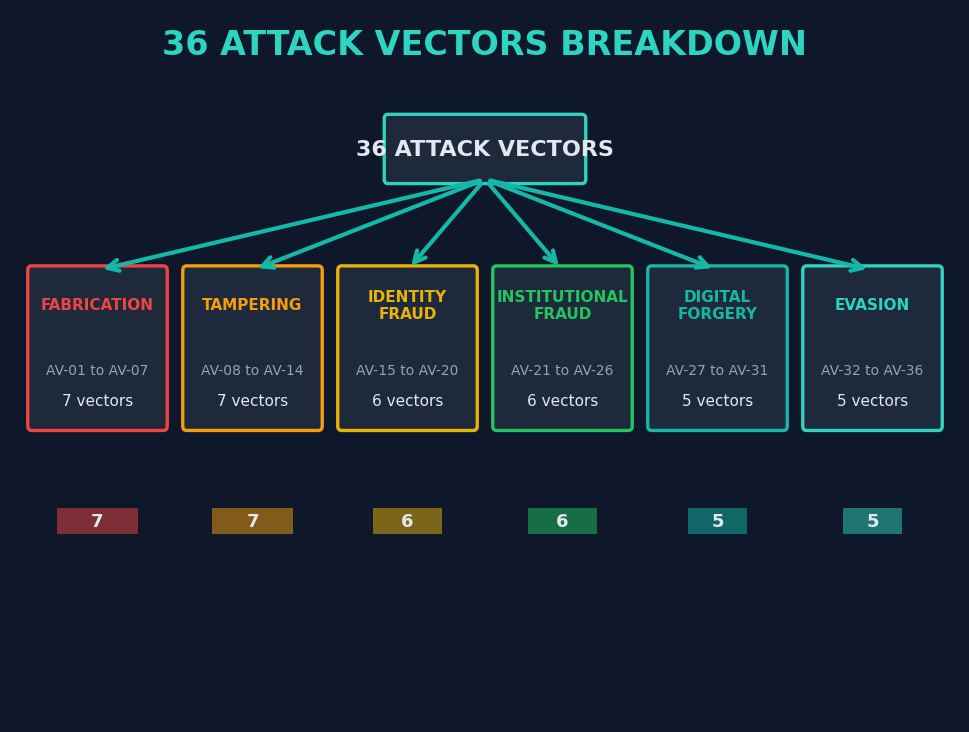

4.2 Attack Vector Defense

The 36 attack vectors (AV-01 through AV-36) represent specific forgery techniques that the system is designed to detect. These are organized into six families based on the nature of the attack.

Family 1: Fabrication (AV-01 to AV-07)

Fabrication attacks involve the creation of entirely fictitious documents. These range from crude attempts using online template generators to sophisticated AI-generated documents.

| AV ID | Attack Vector | Detection Method |
|---|---|---|
| AV-01 | Vendor watermark forgery | Pre-screen detects watermarks from known forgery mills (e.g., "SAMPLE", vendor URLs) |
| AV-02 | Phantom institution | Institution name checked against reference databases; non-existent institutions flagged |
| AV-03 | Placeholder text | Pre-screen detects lorem ipsum, template placeholder strings, and default values |
| AV-04 | Template generator artifacts | Known template generator layouts and styling patterns identified |
| AV-05 | AI-generated document | Detects statistical patterns characteristic of generative AI output |
| AV-06 | Stock image insertion | Identifies stock photography watermarks, metadata, and known image hashes |
| AV-07 | Blank template filling | Detects inconsistencies between pre-printed elements and filled-in content |

Family 2: Tampering (AV-08 to AV-14)

Tampering attacks modify legitimate documents to alter specific data fields while preserving the overall structure.

| AV ID | Attack Vector | Detection Method |
|---|---|---|
| AV-08 | Grade/score inflation | Mathematical recalculation of GPAs, totals, and weighted averages |
| AV-09 | Date alteration | Font analysis of date fields; chronological plausibility checks |
| AV-10 | Name substitution | Cross-reference name across all document instances; font consistency in name fields |
| AV-11 | Photo replacement | Resolution and compression analysis of photo region vs. document body |
| AV-12 | Seal/stamp overlay | Seal positioning, layering artifacts, and chromatic consistency analysis |
| AV-13 | QR code replacement | Comparison of QR-encoded data against visible document content |
| AV-14 | Selective content removal | Detection of blank regions inconsistent with expected template structure |

Family 3: Identity Fraud (AV-15 to AV-20)

Identity fraud attacks use documents that may be technically genuine but are presented by or attributed to the wrong individual.

| AV ID | Attack Vector | Detection Method |
|---|---|---|
| AV-15 | Name mismatch across documents | Cross-document consistency checking within applicant folders |
| AV-16 | ID number recycling | Duplicate detection across verification database |
| AV-17 | Photo-ID inconsistency | Cross-reference identity photos across documents in the same folder |
| AV-18 | Biographical data conflict | Age, birthdate, and timeline cross-validation |
| AV-19 | Nationality/jurisdiction mismatch | Geographic plausibility of claimed nationality vs. document origin |
| AV-20 | Alias exploitation | Name normalization across scripts and transliteration systems |

Family 4: Institutional Fraud (AV-21 to AV-26)

Institutional fraud involves documents from real institutions that have been manipulated or misrepresented.

| AV ID | Attack Vector | Detection Method |
|---|---|---|
| AV-21 | Accreditation misrepresentation | Accreditation status verification against authoritative databases |
| AV-22 | Defunct institution exploitation | Institution operational status verification |
| AV-23 | Program/degree fabrication | Verification that the claimed program exists at the claimed institution |
| AV-24 | Template version anachronism | Document template style compared against known historical versions |
| AV-25 | Signatory impersonation | Signatory names and titles cross-referenced where possible |
| AV-26 | Campus/branch misattribution | Verification of campus-specific details and formatting |

Family 5: Digital Forgery (AV-27 to AV-31)

Digital forgery attacks exploit the digital medium itself, manipulating image properties and metadata.

| AV ID | Attack Vector | Detection Method |
|---|---|---|
| AV-27 | Layer compositing | Compression artifact analysis revealing multiple editing stages |
| AV-28 | Color space manipulation | Color profile consistency analysis across document regions |
| AV-29 | Resolution stitching | Detection of resolution boundaries within a single document image |
| AV-30 | Metadata spoofing | Cross-validation of metadata claims against image properties |
| AV-31 | Screenshot-of-printout attack | Detection of screen artifacts, moire patterns, and perspective distortion |

Family 6: Evasion (AV-32 to AV-36)

Evasion attacks are designed specifically to circumvent automated verification systems.

| AV ID | Attack Vector | Detection Method |
|---|---|---|
| AV-32 | Deliberate image degradation | Quality assessment relative to expected norms for the document type |
| AV-33 | Partial document submission | Completeness checks against expected sections for the document type |
| AV-34 | Non-standard orientation | Orientation detection and normalization during pre-processing |
| AV-35 | Embedded steganographic data | Analysis of least-significant bit patterns in image data |
| AV-36 | Adversarial perturbation | Robustness checks against pixel-level perturbations designed to mislead AI models |

4.2.1 The Verification Kill Chain

The multi-stage pipeline distributes attack vector detection across layers, ensuring that each stage catches the attacks it is best positioned to identify.

4.3 Scoring Model

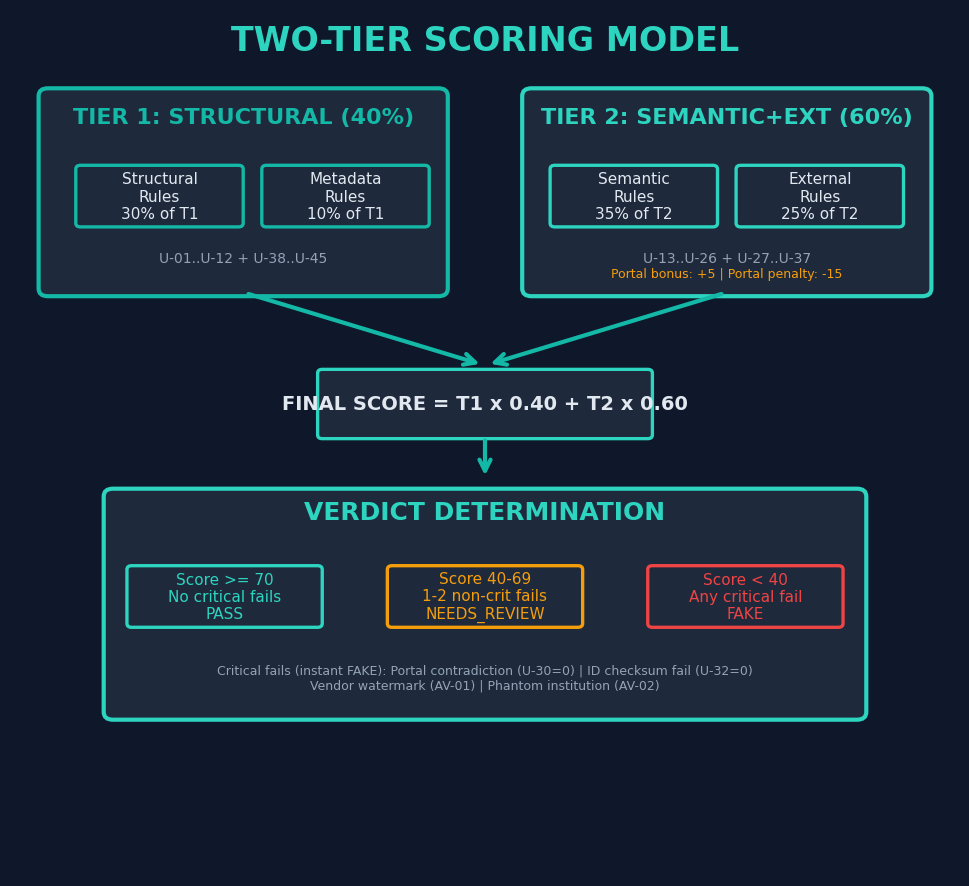

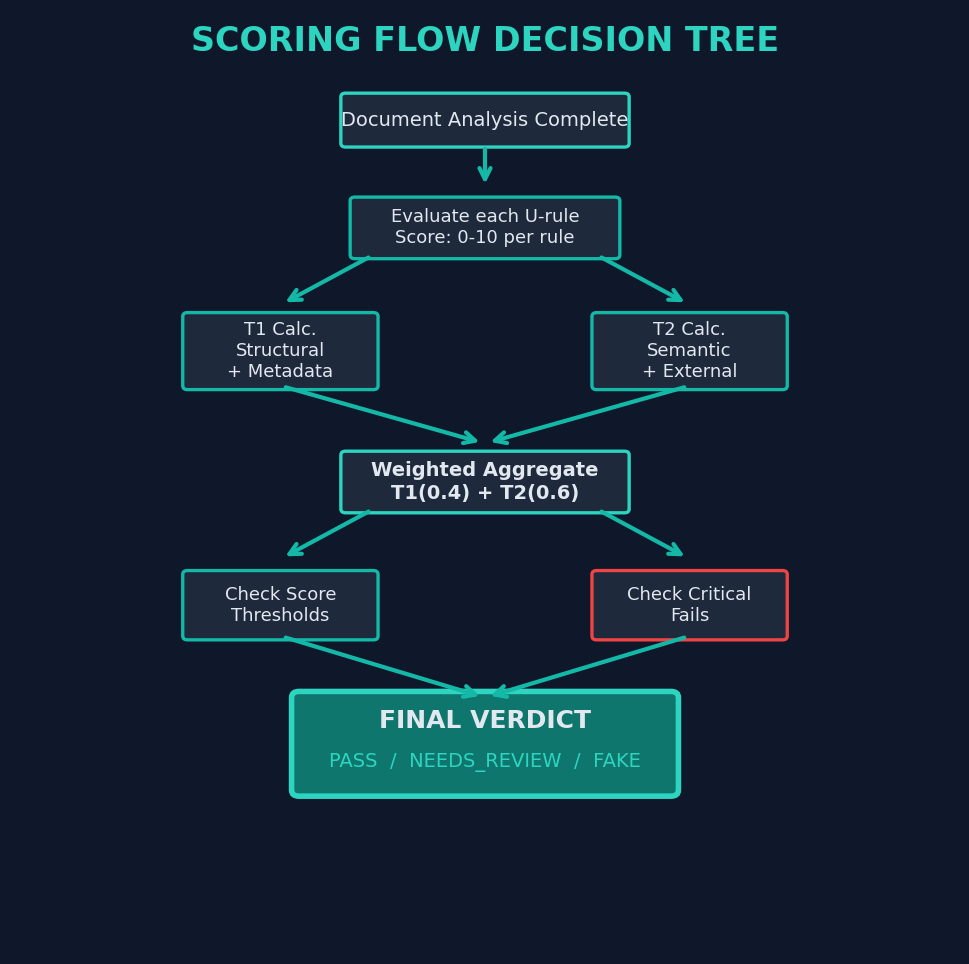

Turing Verify employs a two-tier scoring model that separates structural assessment (Tier 1) from semantic and contextual assessment (Tier 2). This separation enables nuanced verdicts that distinguish between documents with formatting irregularities and documents with substantive content anomalies.

4.3.1 Scoring Flow

4.3.2 Attack Vector Overlay

In addition to the U-rule scoring, triggered attack vectors apply penalty modifiers to the final score. The magnitude of the penalty depends on the severity classification of the attack vector:

| Severity | Penalty | Examples |
|---|---|---|
| Critical | Instant FAKE verdict | AV-01 (vendor watermark), AV-02 (phantom institution) |
| High | -20 to -30 points | AV-08 (grade inflation), AV-13 (QR replacement) |
| Medium | -10 to -19 points | AV-09 (date alteration), AV-24 (template anachronism) |
| Low | -5 to -9 points | AV-32 (image degradation), AV-34 (non-standard orientation) |
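The penalty table can be read as a simple overlay on the U-rule score. The sketch below is one possible interpretation under stated assumptions: a 0 to 100 score scale, the midpoint of each penalty range as the applied value, clamping at zero, and a zeroed score on a critical hit. None of these choices is specified in the text, and `apply_overlay` is a hypothetical name.

```python
from typing import Iterable, Optional, Tuple

# Penalty ranges from the severity table, as (minimum, maximum) points deducted.
PENALTY_RANGES = {
    "high": (20, 30),
    "medium": (10, 19),
    "low": (5, 9),
}


def apply_overlay(
    base_score: float,
    triggered: Iterable[Tuple[str, str]],  # (av_id, severity) pairs
) -> Tuple[float, Optional[str]]:
    """Return (adjusted_score, forced_verdict).

    forced_verdict is "FAKE" when any critical vector fired, else None.
    """
    triggered = list(triggered)
    if any(severity == "critical" for _, severity in triggered):
        # Critical vectors short-circuit scoring; zeroing the score is an assumption.
        return 0.0, "FAKE"
    penalty = 0.0
    for _, severity in triggered:
        low, high = PENALTY_RANGES[severity]
        penalty += (low + high) / 2  # assumed: midpoint of the stated range
    return max(0.0, base_score - penalty), None
```

Under these assumptions, a base score of 90 with AV-08 (high) and AV-34 (low) triggered loses 25 and 7 points and lands at 58.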

4.4 Calibration and Ground Truth

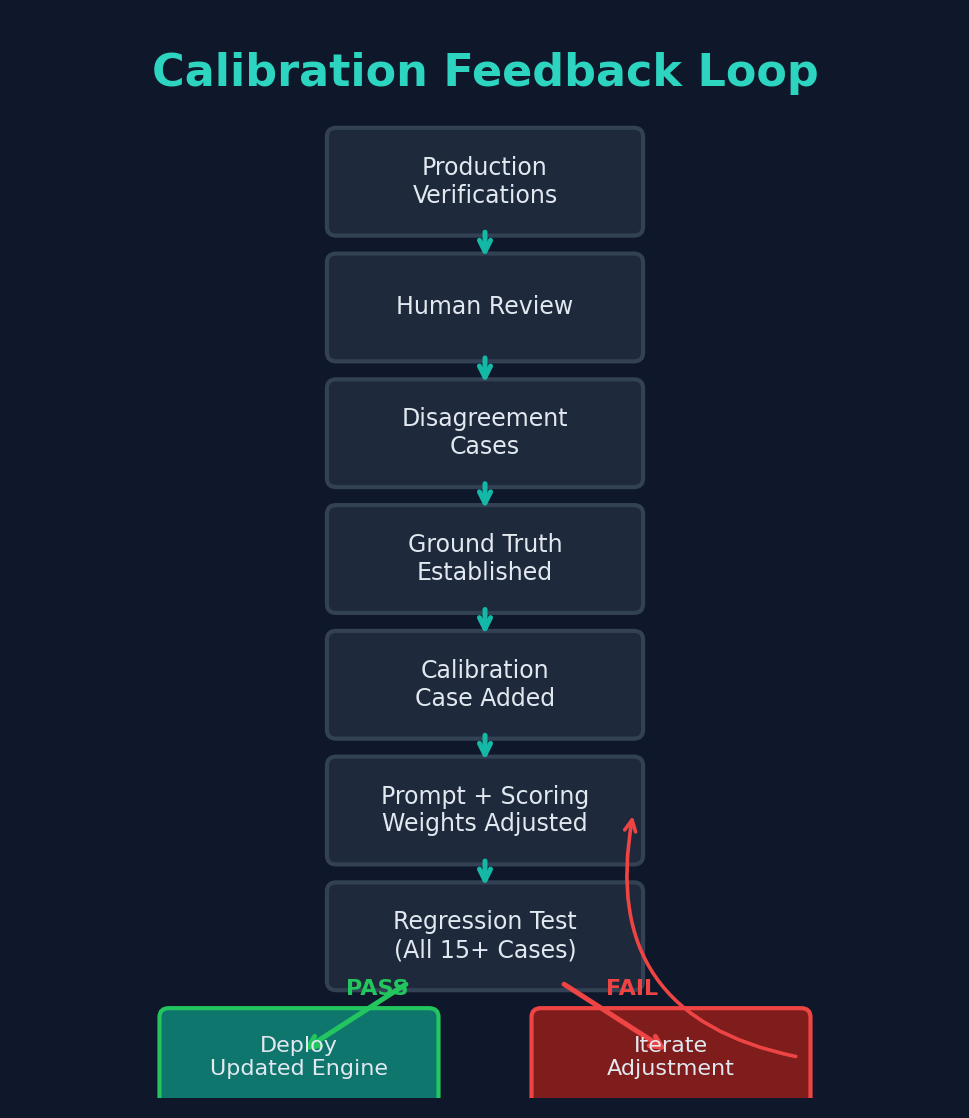

The forensic engine is calibrated against a set of 15 ground-truth cases that span the range of document types, attack vectors, and difficulty levels encountered in production. Each calibration case has a known authentic or fraudulent classification established through independent verification.

4.4.1 Continuous Calibration Feedback Loop

The calibration process is not a one-time activity. The system implements a continuous feedback loop that incorporates new ground-truth data as it becomes available.

4.4.2 Institution Template Library

The system maintains a library of 18 institution templates that encode layout specifications, expected formatting conventions, and security feature locations for frequently encountered issuing institutions. Templates are versioned to account for institutions that have changed their document designs over time. When a document claims to originate from a templated institution, the forensic analysis can perform pixel-level layout comparison in addition to general structural analysis, significantly increasing detection sensitivity for that institution's documents.

5. QR Code Verification Pipeline

5.1 Overview

An increasing number of credential-issuing institutions embed QR codes in their documents as a verification mechanism. These QR codes typically encode either a URL pointing to a verification portal or a data payload containing document details. When present, QR code data provides a high-confidence external reference point for verifying document authenticity.

However, QR codes in document images present significant technical challenges for extraction. Documents may be photographed at angles, scanned at varying resolutions, printed with ink that partially obscures the QR pattern, or degraded through photocopying. To address these challenges, Turing Verify implements a 7-strategy cascade scanner that applies progressively more aggressive image processing techniques.
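The cascade idea, trying cheap strategies first and falling back to more aggressive preprocessing only when decoding fails, can be sketched generically. The seven strategies themselves are not enumerated here, so the sketch takes them as caller-supplied callables; `cascade_scan`, the strategy names, and the decoder interface are all hypothetical.

```python
from typing import Callable, Optional, Sequence, Tuple, TypeVar

Image = TypeVar("Image")
Strategy = Tuple[str, Callable[[Image], Image]]  # (name, preprocessing step)


def cascade_scan(
    image: Image,
    strategies: Sequence[Strategy],
    decode: Callable[[Image], Optional[str]],
) -> Optional[Tuple[str, str]]:
    """Apply each preprocessing strategy in order until the decoder succeeds.

    Returns (strategy_name, payload) for the first success, or None if every
    strategy fails. Strategies should be ordered from least to most aggressive.
    """
    for name, preprocess in strategies:
        payload = decode(preprocess(image))
        if payload is not None:
            return name, payload
    return None
```

Returning the name of the winning strategy alongside the payload gives the forensic report a record of how much processing was needed to recover the code.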

5.2 Strategy Cascade

5.3 Trusted Domain Model

When a QR code is successfully decoded and contains a URL, the system evaluates that URL against a trusted domain allowlist comprising 15 verified domains belonging to legitimate verification portals. This trust model serves multiple purposes:

- Phishing prevention: Prevents the system from following URLs to malicious sites that could impersonate legitimate verification portals.

- Forgery detection: A QR code pointing to an untrusted domain strongly suggests document manipulation, as legitimate institutions use established verification infrastructure.

- Data quality assurance: Data extracted from trusted portals has higher reliability than data from unknown sources.
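A minimal sketch of the allowlist check follows. The domains shown are placeholders rather than entries from the actual 15-domain allowlist, and two behaviours are assumptions: requiring HTTPS, and treating subdomains of an allowlisted domain as trusted.

```python
from urllib.parse import urlsplit

# Placeholder entries; the real allowlist is not reproduced here.
TRUSTED_DOMAINS = frozenset({"verify.example.edu", "credentials.example.org"})


def is_trusted_qr_url(url: str, allowlist: frozenset = TRUSTED_DOMAINS) -> bool:
    """Return True only when the URL's host is on the allowlist."""
    try:
        parts = urlsplit(url)
        host = (parts.hostname or "").lower().rstrip(".")
    except ValueError:
        return False  # malformed URL
    if parts.scheme != "https":  # assumption: plain HTTP is never trusted
        return False
    # Compare on the parsed hostname, never on the raw string, so that
    # look-alikes such as "verify.example.edu.evil.test" do not match.
    return any(host == d or host.endswith("." + d) for d in allowlist)
```

Matching on the parsed hostname rather than a substring of the URL is what defeats tricks such as embedding a trusted domain in the userinfo or path component.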

5.4 Portal Data Extraction Pipeline

When a QR code resolves to a trusted verification portal, the system extracts structured data from the portal response and cross-references it against the document content.
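The cross-referencing step can be illustrated with a simple field comparison. The field names, the whitespace and case normalization, and the `compare_with_portal` helper are assumptions made for the sketch; the actual portal response schema is not described in the text.

```python
from typing import Dict, Optional, Tuple


def normalize(value: str) -> str:
    """Case-fold and collapse whitespace so trivial differences do not count."""
    return " ".join(value.casefold().split())


def compare_with_portal(
    document_fields: Dict[str, str],
    portal_fields: Dict[str, str],
) -> Dict[str, Tuple[Optional[str], str]]:
    """Return {field: (document_value, portal_value)} for every disagreement.

    Only fields the portal actually reports are checked; a field missing
    from the document counts as a mismatch.
    """
    mismatches: Dict[str, Tuple[Optional[str], str]] = {}
    for key, portal_value in portal_fields.items():
        doc_value = document_fields.get(key)
        if doc_value is None or normalize(doc_value) != normalize(portal_value):
            mismatches[key] = (doc_value, portal_value)
    return mismatches
```

An empty result means every portal-reported field agrees with the document; any entry in the result is a candidate finding for rule U-30 (Portal Data Match).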

6. Country-Specific Validation

6.1 Overview

Document verification cannot be performed in a jurisdiction-agnostic manner. Each country has distinct conventions for national identification numbers, business registration formats, document layouts, and verification infrastructure. Turing Verify implements country-specific validation modules that apply jurisdiction-appropriate checks.

6.2 National ID Checksum Validation

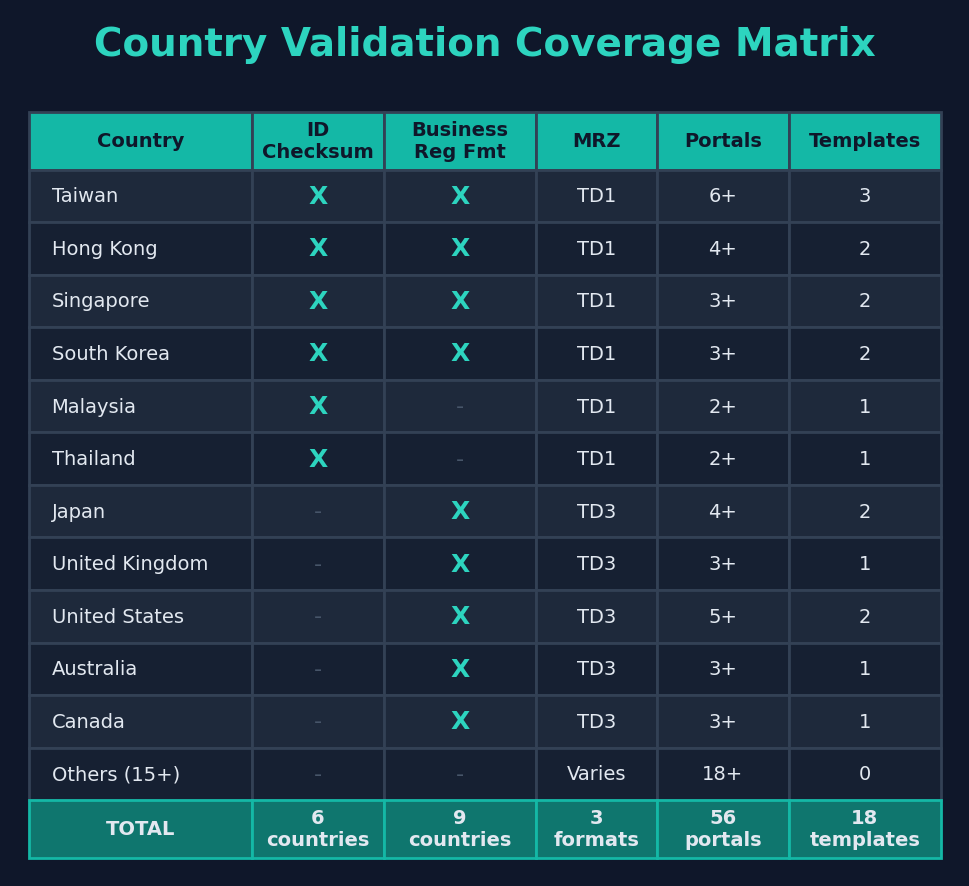

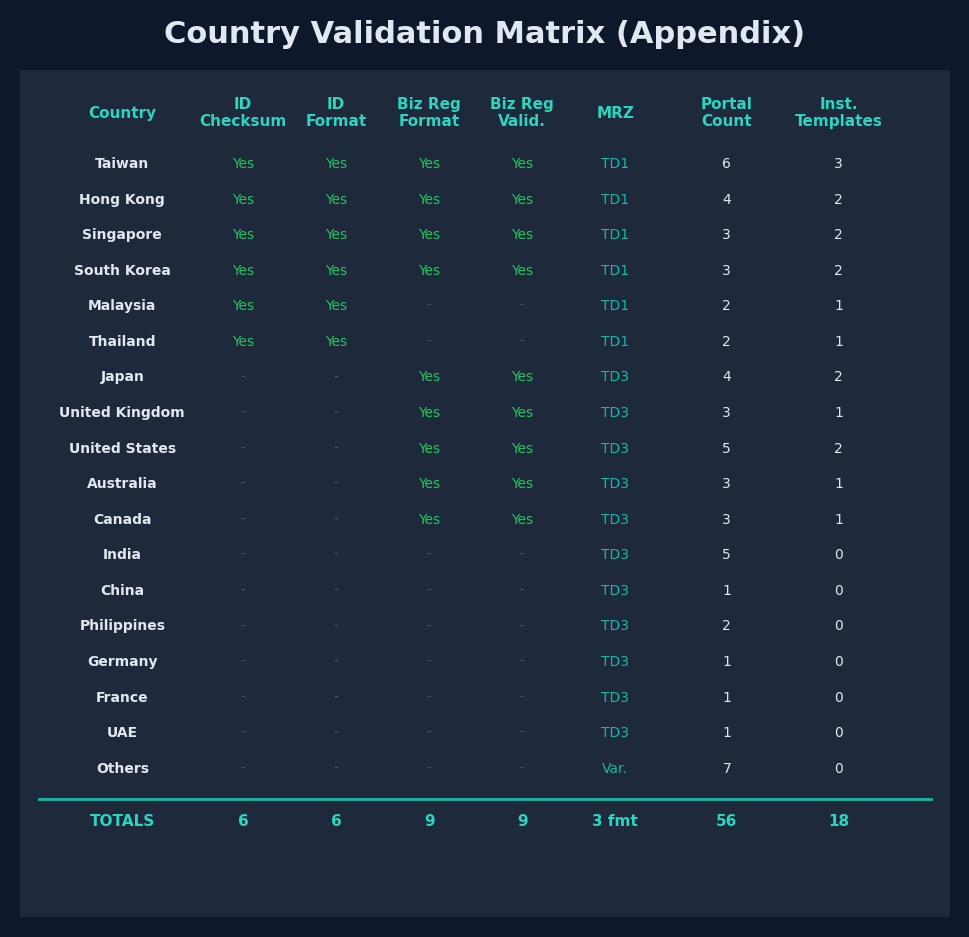

Six countries have implemented national ID systems with algorithmic checksums that enable mathematical verification of ID number validity. Turing Verify implements checksum validation for each of these systems.
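To illustrate what an algorithmic checksum looks like in practice, the sketch below implements the widely used Luhn (mod 10) check. It is a stand-in chosen for familiarity: this section does not enumerate the six national algorithms, and nothing here implies that any of them is Luhn.

```python
def luhn_valid(number: str) -> bool:
    """Check a digit string against the Luhn (mod 10) algorithm.

    Illustrative stand-in only; each national ID scheme defines its own
    weights, alphabet, and check-digit position.
    """
    if len(number) < 2 or not (number.isascii() and number.isdigit()):
        return False
    total = 0
    # Walk from the rightmost digit; double every second digit.
    for i, ch in enumerate(reversed(number)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9  # same as summing the two digits of the product
        total += d
    return total % 10 == 0
```

Changing any single digit of a valid number changes the sum modulo 10, which is why a checksum of this kind catches single-digit edits to an otherwise genuine ID.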

6.3 Business Registration Format Validation

Nine countries have sufficiently standardized business registration numbering systems to enable format validation. The system checks that registration numbers conform to the expected pattern for the claimed jurisdiction.

6.4 MRZ (Machine Readable Zone) Validation

Machine Readable Zones on travel documents and national IDs follow the ICAO 9303 standard, which defines three formats based on document type.
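The ICAO 9303 check digit used throughout the MRZ can be sketched in a few lines: characters are mapped to values (digits as themselves, A to Z as 10 to 35, the filler `<` as 0), multiplied by the repeating weights 7, 3, 1, and summed modulo 10. The function names below are illustrative and are not taken from the platform.

```python
from typing import List, Optional

# ICAO 9303 MRZ layouts: (number of lines, characters per line).
MRZ_FORMATS = {"TD1": (3, 30), "TD2": (2, 36), "TD3": (2, 44)}
WEIGHTS = (7, 3, 1)


def mrz_char_value(ch: str) -> int:
    """Map one MRZ character to its numeric value."""
    if "0" <= ch <= "9":
        return int(ch)
    if "A" <= ch <= "Z":
        return ord(ch) - ord("A") + 10
    if ch == "<":
        return 0
    raise ValueError(f"invalid MRZ character: {ch!r}")


def mrz_check_digit(field: str) -> int:
    """Compute the check digit for an MRZ field (weights 7, 3, 1 repeating)."""
    return sum(mrz_char_value(ch) * WEIGHTS[i % 3] for i, ch in enumerate(field)) % 10


def detect_mrz_format(lines: List[str]) -> Optional[str]:
    """Return 'TD1', 'TD2', or 'TD3' based on line count and length, else None."""
    if not lines or any(len(line) != len(lines[0]) for line in lines):
        return None
    shape = (len(lines), len(lines[0]))
    for name, expected in MRZ_FORMATS.items():
        if shape == expected:
            return name
    return None
```

For example, `mrz_check_digit("740812")` evaluates to 2, which is the check digit that follows a date of birth of 12 August 1974 in a valid MRZ.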

6.5 Country Coverage Matrix

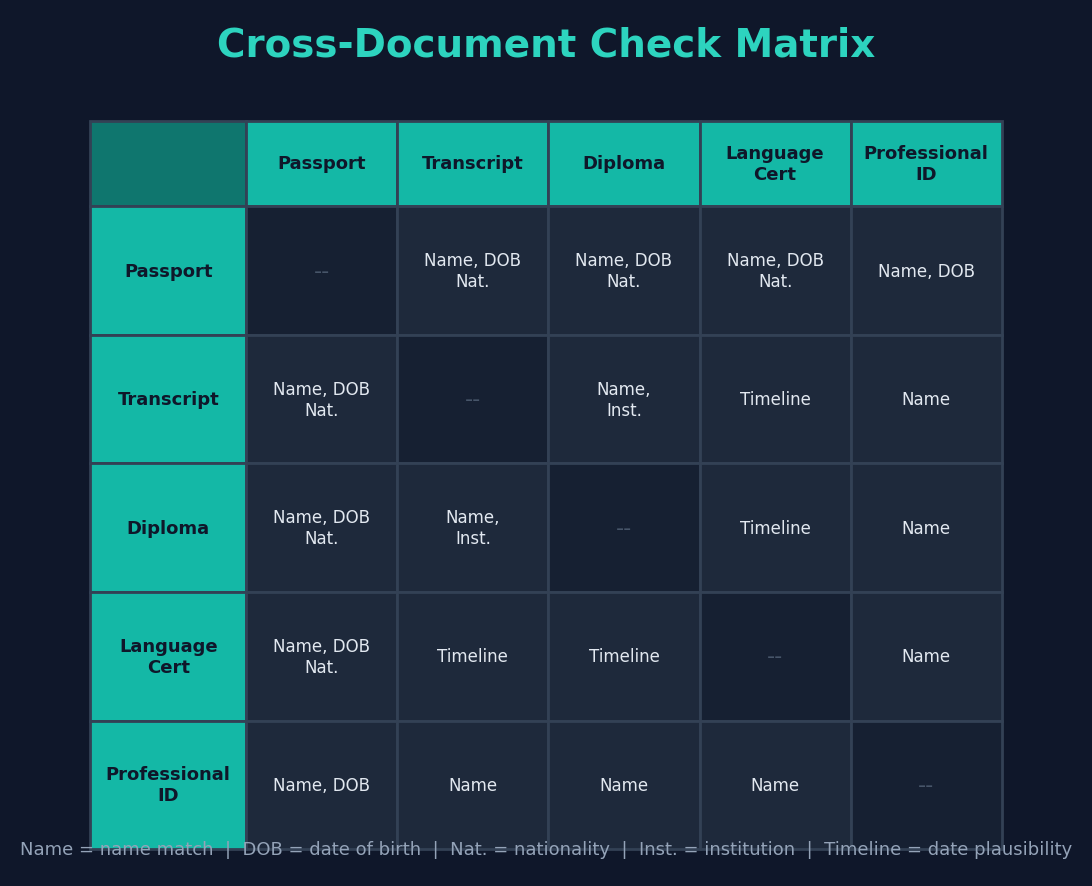

7. Cross-Document Consistency Checking

7.1 Applicant Folder Model

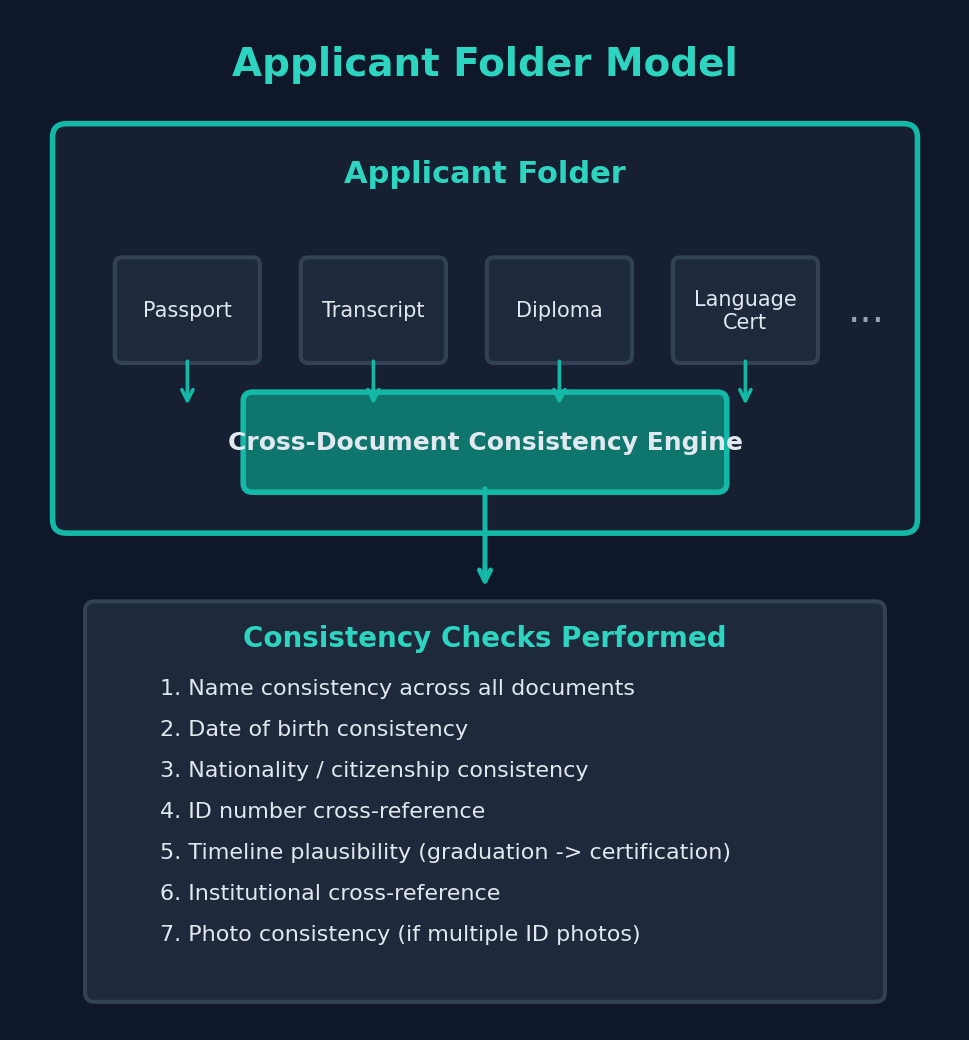

In many verification workflows, multiple documents are submitted by the same individual as part of a single application. An admissions office, for example, may receive a passport, academic transcript, diploma, and language proficiency certificate from a single applicant. Turing Verify organizes related documents into applicant folders, enabling cross-document consistency analysis that individual document verification cannot provide.

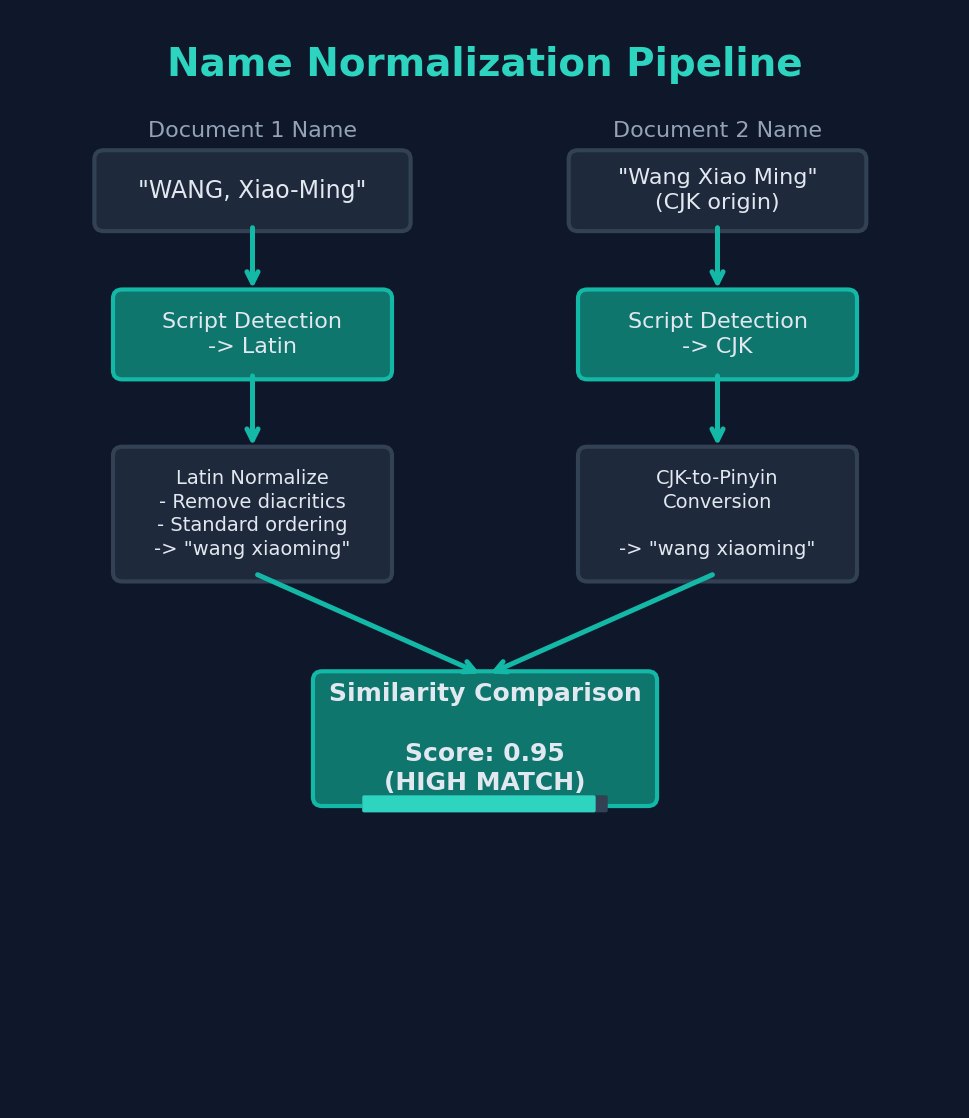

7.2 Name Normalization Across Scripts

A significant challenge in cross-document consistency checking is name matching across different scripts and transliteration systems. An applicant's name may appear in Latin script on a passport, in CJK characters on an academic transcript, and in a different romanization on a professional certificate.

The system implements multi-script name normalization that:

- Identifies the script of each name instance (Latin, CJK, Cyrillic, Arabic, Devanagari, etc.)

- Applies standard transliteration mappings between scripts

- Normalizes Latin-script names for common variations (diacritics, hyphenation, ordering)

- Computes similarity scores that account for expected transliteration variation
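A minimal sketch of the script-identification and Latin-normalization steps is shown below, using only the Python standard library. It does not transliterate between scripts, and the use of `difflib` for similarity is an assumption made for illustration; the paper does not specify the similarity measure.

```python
import re
import unicodedata
from collections import Counter
from difflib import SequenceMatcher


def detect_script(name: str) -> str:
    """Heuristic: take the first word of each letter's Unicode name
    (for example LATIN, CJK, CYRILLIC) and return the most common one."""
    scripts = Counter(
        unicodedata.name(ch, "UNKNOWN").split()[0] for ch in name if ch.isalpha()
    )
    return scripts.most_common(1)[0][0] if scripts else "UNKNOWN"


def normalize_latin_name(name: str) -> str:
    """Strip diacritics, fold case, drop punctuation, and sort name parts
    so that ordering and hyphenation differences disappear."""
    decomposed = unicodedata.normalize("NFKD", name)
    stripped = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    cleaned = re.sub(r"[^\w\s]", " ", stripped.casefold())
    return " ".join(sorted(cleaned.split()))


def name_similarity(a: str, b: str) -> float:
    """Similarity in [0, 1] between two Latin-script names after normalization."""
    return SequenceMatcher(None, normalize_latin_name(a), normalize_latin_name(b)).ratio()
```

With this sketch, "José García-López" and "GARCIA LOPEZ, Jose" normalize to the same string and score 1.0, while different romanizations of the same name score below 1.0 and would need a tolerance threshold.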

7.3 Cross-Document Check Matrix

The system performs pairwise checks between all documents in a folder, evaluating each applicable consistency dimension.
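The pairwise structure can be sketched with `itertools.combinations`. The field names and the exact-equality comparison are assumptions of this example; a real check would apply dimension-specific logic such as the name similarity scoring described in Section 7.2.

```python
from itertools import combinations
from typing import Dict, List, Tuple


def pairwise_conflicts(folder: Dict[str, Dict[str, str]]) -> List[Tuple[str, str, str]]:
    """Compare every pair of documents on the fields they share and
    return (doc_a, doc_b, field) for each disagreement."""
    conflicts = []
    for (name_a, doc_a), (name_b, doc_b) in combinations(folder.items(), 2):
        # Only fields present in both documents are applicable to the pair.
        for field in sorted(doc_a.keys() & doc_b.keys()):
            if doc_a[field] != doc_b[field]:
                conflicts.append((name_a, name_b, field))
    return conflicts


# Hypothetical folder with a one-year date-of-birth discrepancy.
folder = {
    "passport": {"name": "garcia jose lopez", "dob": "1998-04-12"},
    "diploma": {"name": "garcia jose lopez", "dob": "1999-04-12"},
    "transcript": {"name": "garcia jose lopez"},
}
```

A folder of n documents yields n(n-1)/2 pairs, so a four-document folder requires six pairwise comparisons.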

8. Verification Portal Integration

8.1 Portal Landscape

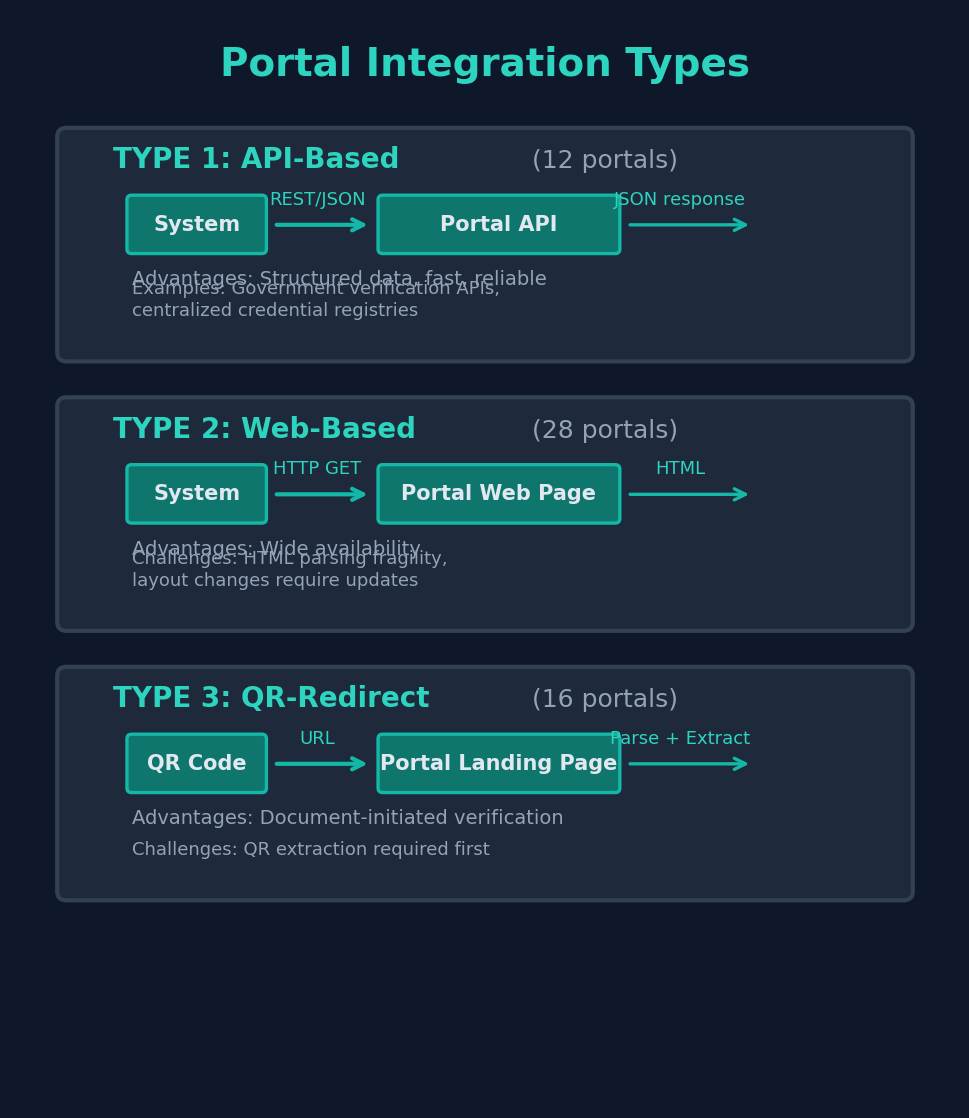

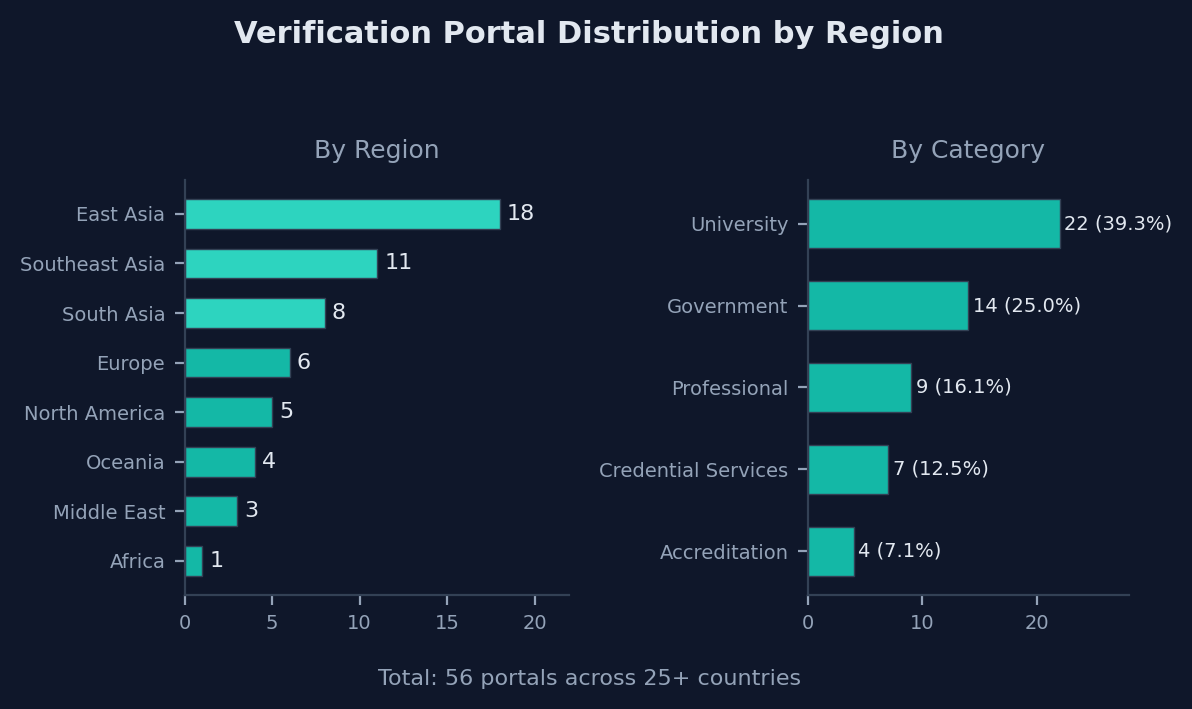

Turing Verify integrates with 56 verification portals operated by educational institutions, government agencies, professional certification bodies, and credential verification services across multiple countries. These portals represent the most reliable external data sources for confirming document authenticity.

8.2 Integration Types

Portal integrations fall into three categories based on the technical mechanism used to retrieve verification data.

8.3 Regional Distribution

8.4 Portal Data Reliability

Not all portal data carries equal weight in the verification process. The system classifies portal data reliability based on the portal operator and data freshness:

| Reliability Tier | Portal Type | Scoring Impact |
|---|---|---|
| Tier 1 (Highest) | Government-operated registries, direct institutional APIs | Full scoring weight; portal contradiction = critical fail |
| Tier 2 (High) | University-operated verification pages, centralized credential services | High scoring weight; contradiction = major penalty |
| Tier 3 (Moderate) | Third-party aggregators, professional body portals | Moderate weight; contradiction = flag for review |

9. Report Generation and User Experience

9.1 Forensic PDF Report Structure

Every verification produces a 12-section forensic PDF report that documents the analysis in sufficient detail to support institutional decision-making and auditing requirements.

9.2 SSE Streaming Timeline

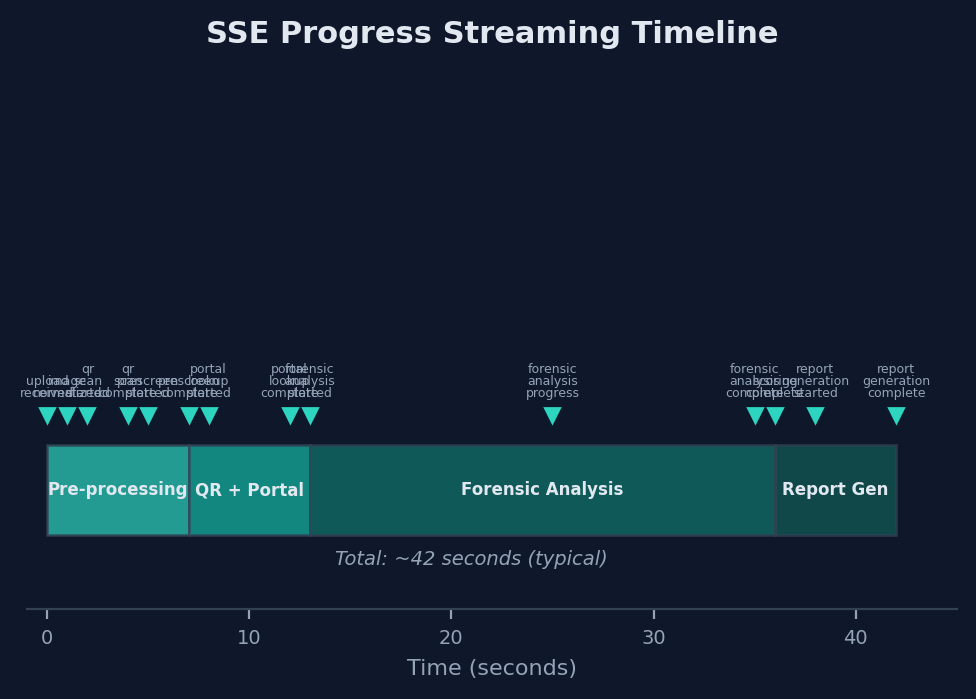

The verification process involves multiple stages that execute over a period of 15 to 45 seconds for a typical document. Rather than leaving users without feedback during processing, the system streams progress updates via Server-Sent Events (SSE).
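The wire format of such a stream can be sketched as below. Each Server-Sent Event is a block of `field: value` lines terminated by a blank line. The event names (`progress`, `done`) and payload keys are hypothetical and are not taken from the platform's API.

```python
import json
from typing import Iterable, Iterator


def sse_event(event: str, data: dict) -> str:
    """Serialize one Server-Sent Event frame (terminated by a blank line)."""
    return f"event: {event}\ndata: {json.dumps(data)}\n\n"


def stream_progress(stages: Iterable[str]) -> Iterator[str]:
    """Yield one progress frame per stage, then a final completion frame."""
    stage_list = list(stages)
    for step, stage in enumerate(stage_list, start=1):
        payload = {"stage": stage, "step": step, "total": len(stage_list)}
        yield sse_event("progress", payload)
    yield sse_event("done", {"status": "complete"})
```

A web framework would return this generator as a response with the `text/event-stream` content type, and the browser's `EventSource` API would dispatch each frame as it arrives.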

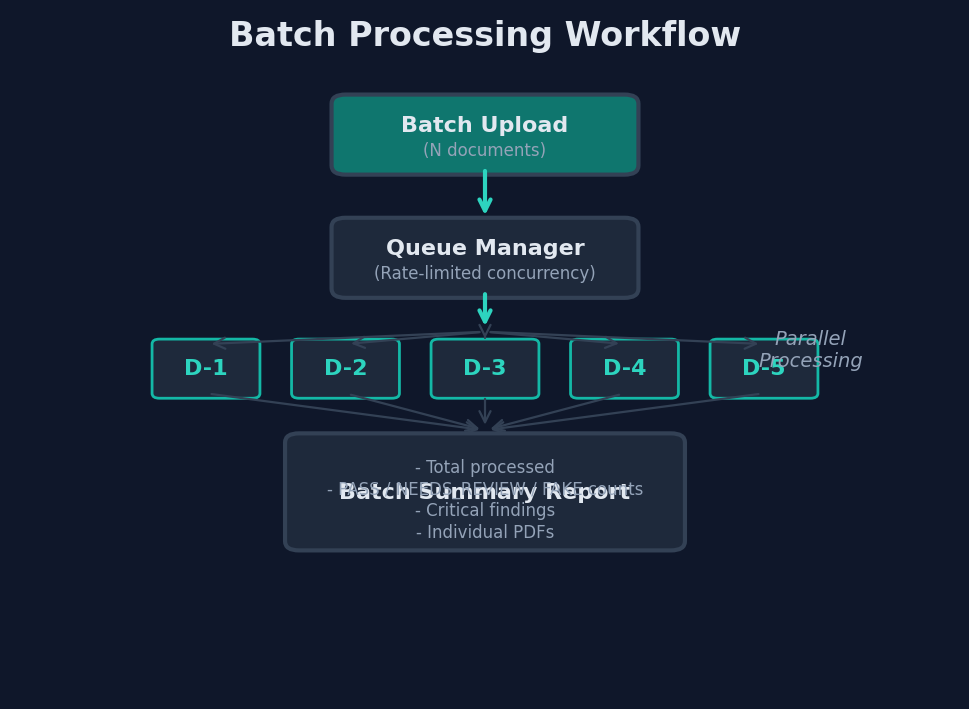

9.3 Batch Processing Workflow

For institutions processing large numbers of documents, Turing Verify supports batch upload with parallel processing and aggregated reporting.

10. Security and Privacy

10.1 Authentication Architecture

The platform implements a multi-provider authentication system supporting four identity providers. JSON Web Tokens (JWT) are used for session management across all authenticated endpoints.

10.2 Rate Limiting Model

Rate limiting is enforced at 10 requests per second per authenticated user across 10 protected endpoints. The rate limiter uses a sliding window algorithm that provides smooth throttling without the burst characteristics of fixed-window approaches.
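One common way to realize a sliding window is the timestamp-log variant sketched below. It is an in-memory illustration under stated assumptions: the paper does not say which sliding-window variant or storage backend is used, and the class name is hypothetical.

```python
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most `limit` requests per user within any `window` seconds."""

    def __init__(self, limit: int = 10, window: float = 1.0) -> None:
        self.limit = limit
        self.window = window
        self._hits = defaultdict(deque)  # user_id -> timestamps of accepted requests

    def allow(self, user_id: str, now: float) -> bool:
        hits = self._hits[user_id]
        # Evict timestamps that have slid out of the window ending at `now`.
        while hits and now - hits[0] >= self.window:
            hits.popleft()
        if len(hits) >= self.limit:
            return False
        hits.append(now)
        return True
```

Because the window is anchored to each request's own timestamp rather than to fixed clock boundaries, a client cannot send a double burst straddling the edge of two adjacent windows, which is the burst problem of fixed-window counters.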

10.3 Data Lifecycle

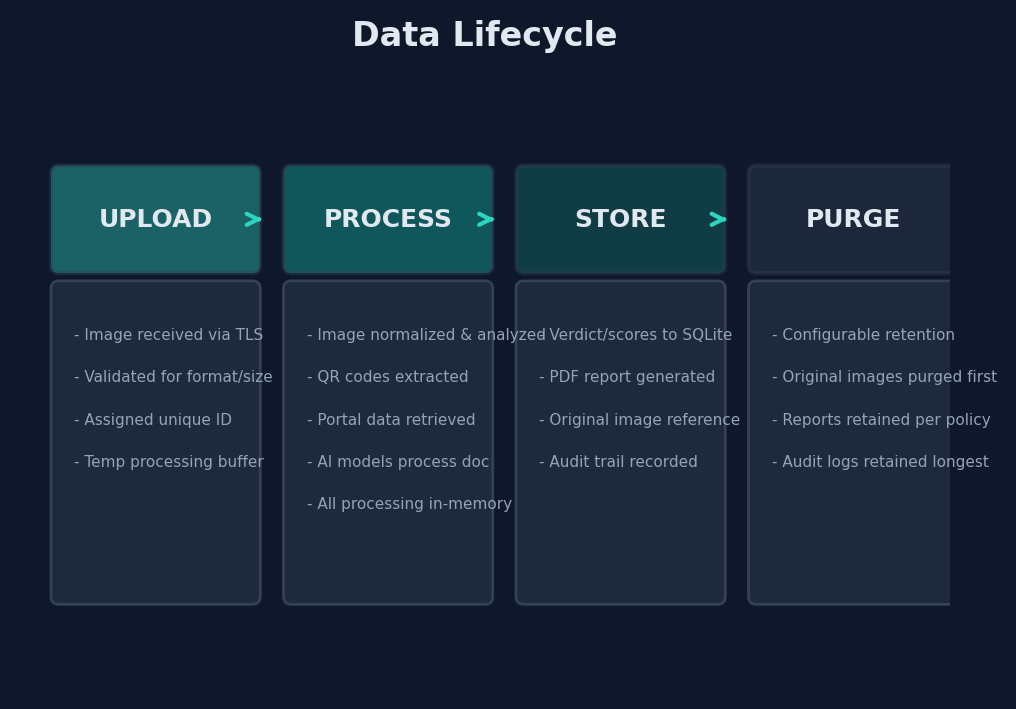

Document images and verification data follow a defined lifecycle that balances operational requirements with privacy considerations.

10.4 Privacy Considerations

The system is designed with several privacy safeguards:

- No biometric storage: The system does not extract, store, or process biometric data (fingerprints, facial recognition, iris patterns) from identity documents.

- Minimal data retention: Only verification results and metadata are retained long-term; original document images are purged according to configurable policies.

- No cross-user data sharing: Verification data from one user or institution is never shared with or visible to other users or institutions.

- Encrypted transit: All data in transit is encrypted via TLS. API communications with AI model providers are similarly encrypted.

- Audit trail: All verification actions are logged for compliance and accountability purposes.

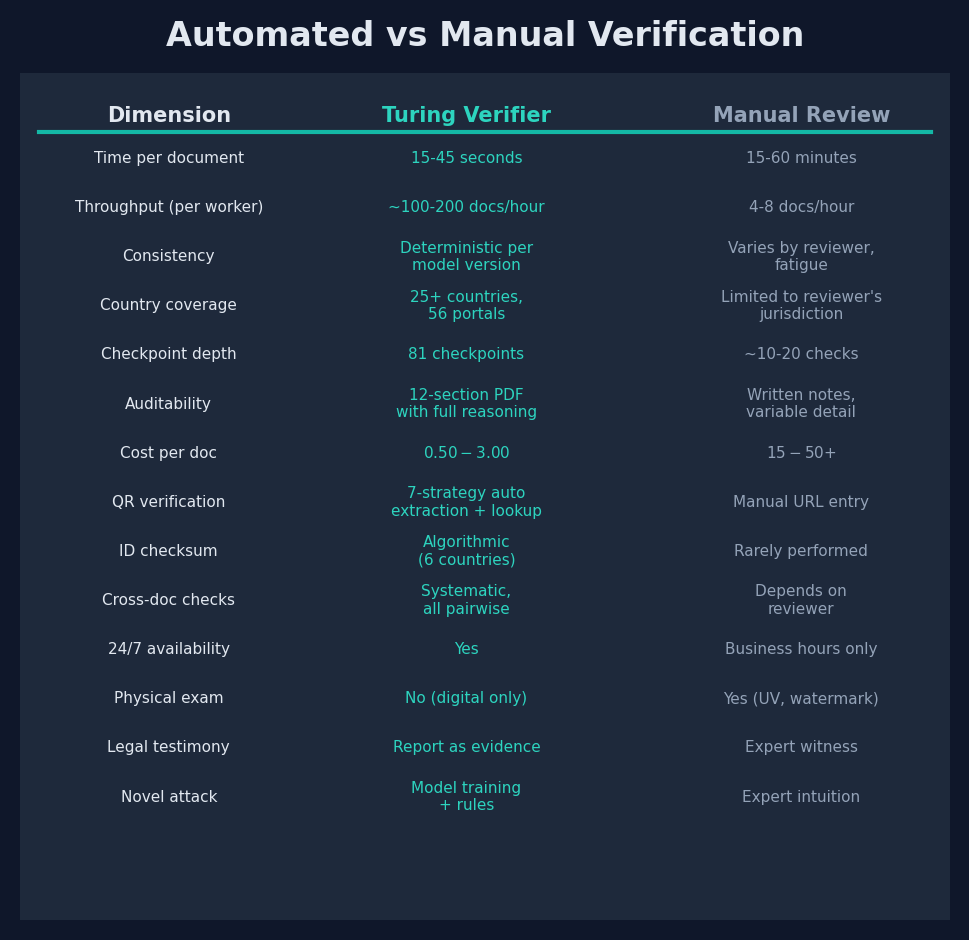

11. Comparative Analysis: Automated vs Manual Verification

11.1 Performance Dimensions

A structured comparison between Turing Verify's automated approach and traditional manual verification reveals distinct advantages and trade-offs across multiple performance dimensions.

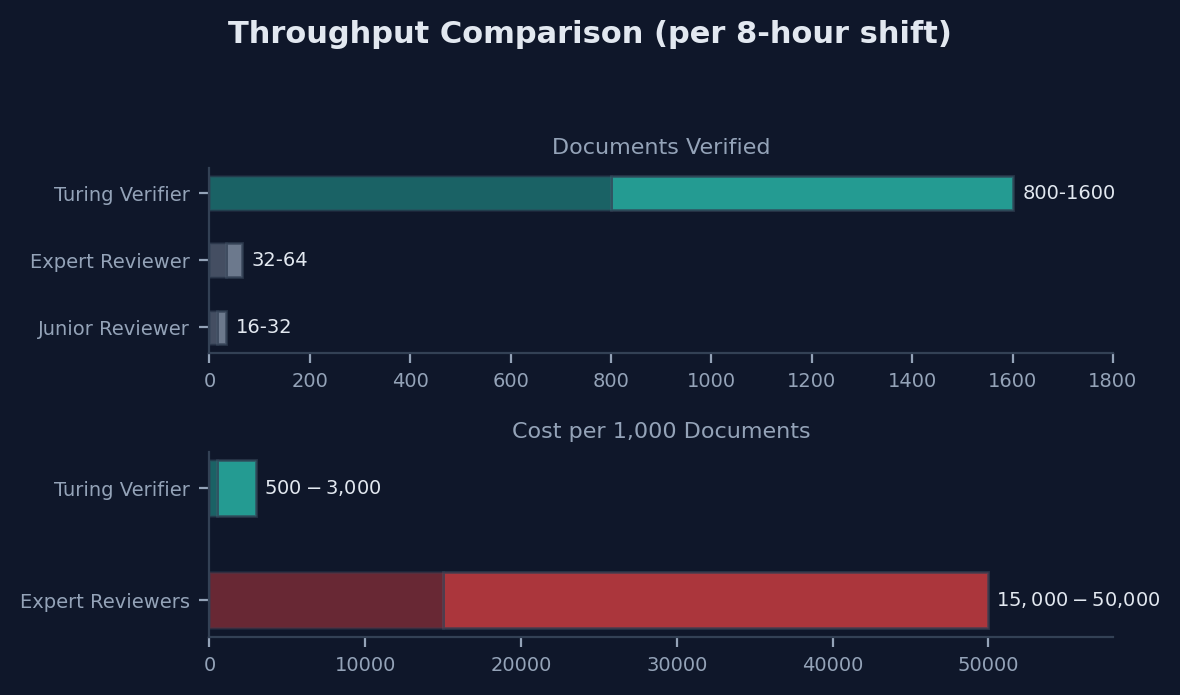

11.2 Throughput Comparison

11.3 AI vs Human Inspector Benchmark System

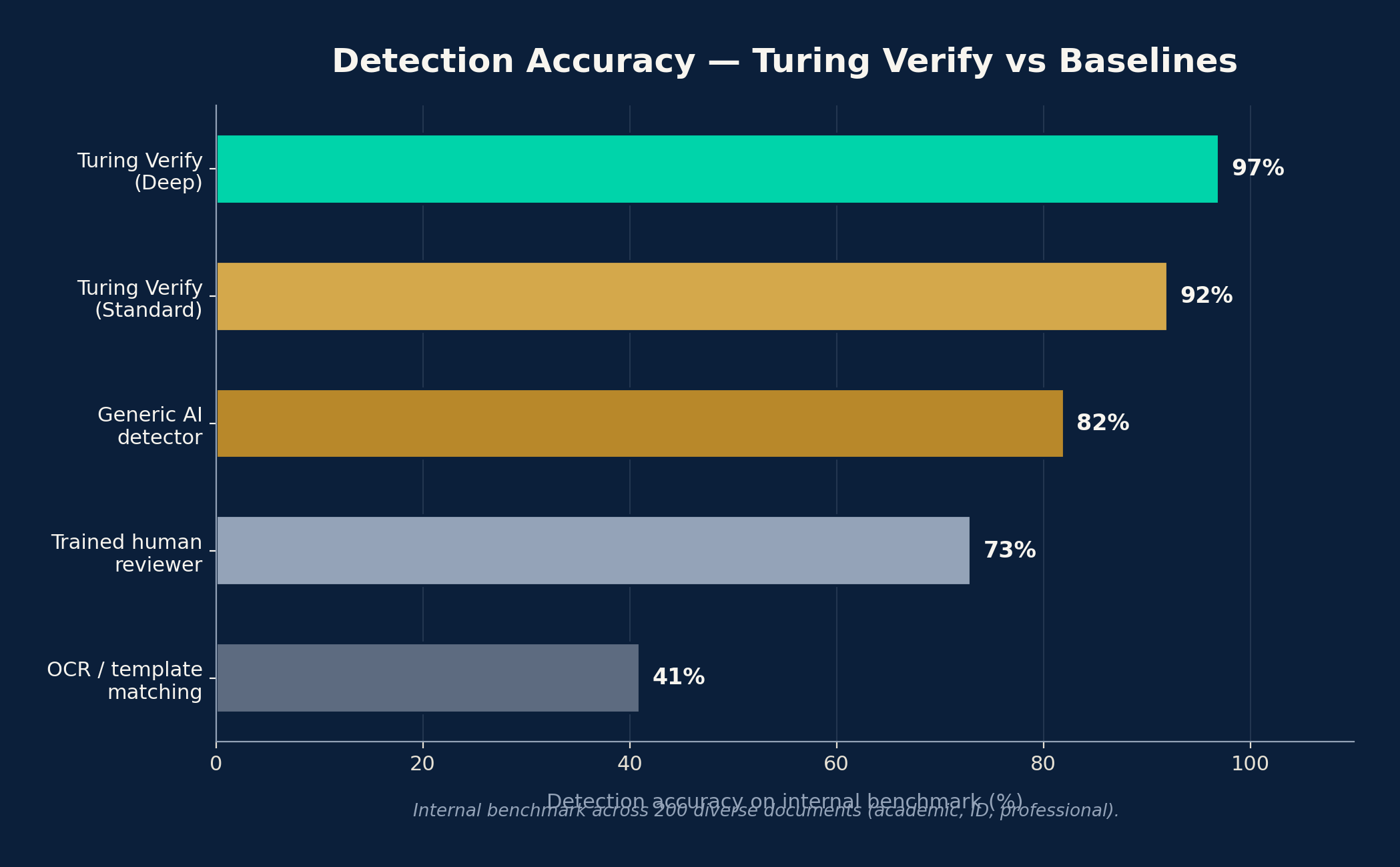

Version 2.0 introduces a comprehensive benchmark framework for quantitatively comparing AI verification performance against human document inspectors. The benchmark provides empirical evidence for the platform's effectiveness and identifies areas where human expertise remains superior.

11.3.1 Benchmark Methodology

The benchmark employs a 200-document dataset stratified across four difficulty tiers: trivial (obvious fakes detectable by untrained observers), easy (fakes with clear indicators visible to trained inspectors), medium (sophisticated forgeries requiring expert analysis), and hard (state-of-the-art forgeries designed to evade both human and automated detection). Documents span all 7 supported document categories and originate from 15+ countries.

Binary classification framework. Both AI and human inspectors produce verdicts that are mapped to a binary classification for benchmark comparison. REJECTED and SUSPECT verdicts are classified as "fraud detected," while VERIFIED verdicts are classified as "no fraud detected." This mapping enables standard binary classification metrics.

Human inspector protocol. Human inspectors participate under controlled conditions: blind testing (no prior knowledge of document provenance), randomized presentation order, automatic timing of each inspection, and fatigue monitoring with mandatory breaks. Inspectors are classified into three tiers based on experience: Junior (0-2 years), Senior (3-7 years), and Expert (8+ years).

11.3.2 Metric Categories

The benchmark evaluates performance across five metric categories, each weighted in a composite score:

| Category | Weight | Metrics | Description |
|---|---|---|---|
| Accuracy | 40% | F1 score, precision, recall | Correctness of fraud/no-fraud classification |
| Speed | 15% | Latency per document, throughput per hour | Time efficiency of the verification process |
| Cost | 15% | Cost per document, cost per fraud caught | Economic efficiency of the approach |
| Consistency | 15% | Multi-run agreement (AI), inter-rater agreement (human) | Reproducibility of results across repeated evaluations |
| Coverage | 15% | Abstention rate | Proportion of documents for which the system provides a definitive verdict |

Statistical significance testing. Benchmark comparisons employ McNemar's test for paired binary classification comparison and bootstrap confidence intervals (1000 resamples) for all metric estimates. Differences are reported as statistically significant at p < 0.05.
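McNemar's test compares two classifiers on the same documents using only the discordant pairs: `b` documents that the first classifier got right and the second got wrong, and `c` for the reverse. The sketch below uses the exact binomial form of the test, which is an assumption; the paper does not state whether the exact or chi-square form is used.

```python
from math import comb


def mcnemar_exact(b: int, c: int) -> float:
    """Two-sided exact McNemar p-value from discordant pair counts.

    Under the null hypothesis, each discordant pair is equally likely
    to favor either classifier, so min(b, c) follows Binomial(b + c, 0.5).
    """
    n = b + c
    if n == 0:
        return 1.0  # no discordant pairs, no evidence of a difference
    k = min(b, c)
    tail = sum(comb(n, i) for i in range(k + 1)) / 2 ** n
    return min(1.0, 2 * tail)
```

For instance, if the AI is correct on 10 documents that a human inspector misjudges and the reverse never happens, `mcnemar_exact(0, 10)` is about 0.002, below the 0.05 threshold; an even split such as `mcnemar_exact(5, 5)` gives 1.0.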

11.3.3 Key Findings

The benchmark dashboard presents results through confusion matrices, ROC curves, and cost-efficiency plots for each inspector tier and AI model configuration.

| Metric | AI (Standard) | AI (Deep) | Human Expert | Human Senior | Human Junior |
|---|---|---|---|---|---|
| F1 Score | 0.91 | 0.95 | 0.93 | 0.85 | 0.72 |
| Precision | 0.94 | 0.97 | 0.96 | 0.89 | 0.75 |
| Recall | 0.88 | 0.93 | 0.90 | 0.82 | 0.69 |
| Avg. latency | ~30s | ~90s | ~25 min | ~18 min | ~12 min |
| Consistency | 98% | 99% | 87% | 79% | 68% |
| Abstention | 5% | 2% | 3% | 8% | 15% |

The benchmark demonstrates that AI verification processes documents orders of magnitude faster than human inspectors, with materially lower cost per fraud caught. Deep Verification mode approaches or exceeds expert-level accuracy while maintaining the speed and consistency advantages of automated analysis.
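The F1 scores in the table above are the harmonic mean of the reported precision and recall, F1 = 2PR / (P + R). The short check below recomputes each F1 from the table's own precision and recall values; the dictionary simply transcribes those figures.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) transcribed from the Key Findings table.
REPORTED = {
    "AI (Standard)": (0.94, 0.88, 0.91),
    "AI (Deep)": (0.97, 0.93, 0.95),
    "Human Expert": (0.96, 0.90, 0.93),
    "Human Senior": (0.89, 0.82, 0.85),
    "Human Junior": (0.75, 0.69, 0.72),
}

recomputed = {name: round(f1_score(p, r), 2) for name, (p, r, _) in REPORTED.items()}
```

Each recomputed value matches the reported F1 to two decimal places, so the accuracy rows of the table are internally consistent.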

11.4 Complementary Roles

The comparison is not intended to suggest that automated verification should entirely replace human review. The two approaches serve complementary roles in a comprehensive verification strategy:

- Automated verification (Turing Verify) excels at high-volume initial screening, systematic checkpoint evaluation, external portal cross-referencing, and consistent documentation of findings.

- Manual expert review excels at evaluating physical document properties, applying contextual judgment to ambiguous cases, providing expert testimony, and detecting truly novel attack patterns that fall outside known categories.

The NEEDS_REVIEW verdict category in Turing Verify's output is explicitly designed to bridge these approaches, flagging documents that warrant human expert attention while providing the automated analysis as a starting point for the reviewer.

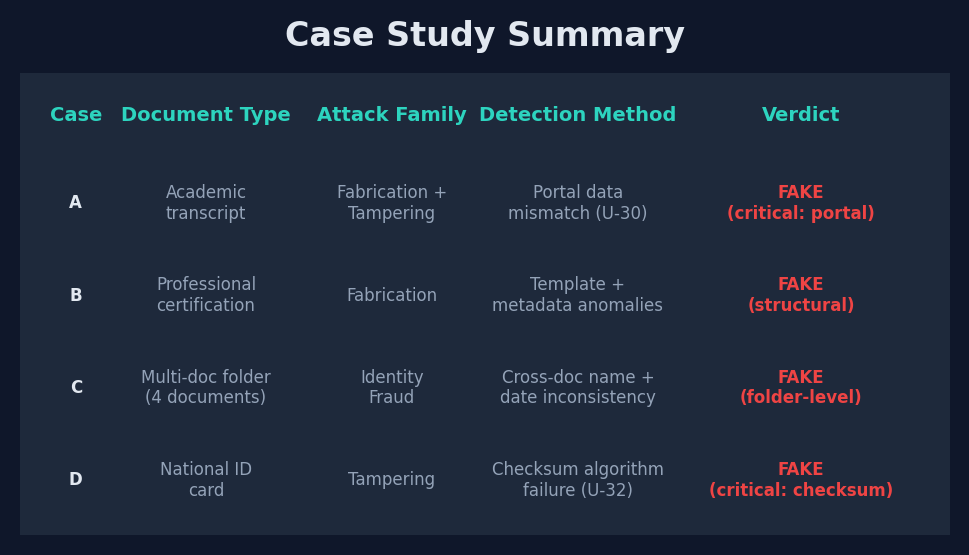

12. Case Studies

The following case studies are drawn from the system's operational history. All identifying details have been anonymized, and institution names have been replaced with generic descriptors to protect the privacy of all parties involved.

12.1 Case A: Forged Academic Transcript -- Portal Mismatch Detection

Document type: Academic transcript (undergraduate)

Claimed origin: A university in East Asia

Attack vector family: Fabrication + Tampering

The submitted transcript appeared visually consistent with known templates from the claimed institution. Font usage, layout structure, and seal placement were within expected parameters. The structural analysis (Tier 1) produced a score in the acceptable range.

However, the document contained an embedded QR code that resolved to the institution's legitimate verification portal. When the system extracted data from the portal, the name and student ID matched, but the grades retrieved from the portal differed significantly from those displayed on the submitted transcript. Specifically, the cumulative GPA shown on the document was substantially higher than the portal-confirmed figure, and three course grades had been altered from their authentic values.

Checkpoints triggered: U-23 (Cumulative Calculation Accuracy), U-30 (Portal Data Match), AV-08 (Grade/Score Inflation). AV-13 (QR Code Replacement) was not triggered: the QR code was authentic, and only the visible grades had been altered.

Outcome: Verdict FAKE. The portal data mismatch on U-30 constituted a critical fail. The forensic report detailed the specific grade discrepancies, enabling the reviewing institution to make an informed decision.

12.2 Case B: Sophisticated Template Forgery -- Layout Anomaly Detection

Document type: Professional certification

Claimed origin: An international certification body in Europe

Attack vector family: Fabrication

This case represented a high-sophistication forgery that replicated the overall appearance of a legitimate professional certification with considerable fidelity. The forger had used accurate institutional branding, plausible administrative language, and a realistic certificate number format.

The system detected the forgery through a combination of structural anomalies invisible to casual inspection. The margin widths deviated from the institution's template by several millimeters, the font used for the certificate number was from a different typographic family than the institution's standard, and the institution's seal was positioned at coordinates inconsistent with any known template version. Additionally, the metadata analysis revealed that the document had been created using consumer image editing software rather than the institutional printing system.

Checkpoints triggered: U-01 (Template Conformance), U-02 (Font Consistency), U-04 (Seal/Stamp Authenticity), U-09 (Logo Fidelity), U-42 (Creation Tool Detection), AV-04 (Template Generator Artifacts).

Outcome: Verdict FAKE. The accumulation of structural anomalies drove the Tier 1 score below the threshold even before semantic analysis. The report documented each deviation with specific measurements and comparisons.

12.3 Case C: Cross-Document Inconsistency in Batch Submission

Document type: Multi-document applicant folder (passport, transcript, diploma, language certificate)

Claimed origin: Multiple institutions across two countries

Attack vector family: Identity Fraud

A batch submission for a single applicant contained four documents. Each document, when analyzed individually, produced acceptable scores. The passport was genuine, the transcript appeared legitimate, the diploma format matched known templates, and the language certificate contained a verifiable QR code.

Cross-document consistency analysis revealed the forgery. The name on the passport (in Latin script) and the name on the transcript (in CJK characters) did not correspond under standard transliteration mappings. The date of birth on the passport differed from that on the diploma by one year. Additionally, the graduation date on the transcript implied a timeline that was inconsistent with the issuance date of the language certificate, suggesting that documents from different individuals had been combined into a single application.

Checkpoints triggered: AV-15 (Name Mismatch Across Documents), AV-18 (Biographical Data Conflict), AV-20 (Alias Exploitation).

Outcome: Verdict FAKE for the folder, with individual document verdicts revised. The report identified which documents were likely authentic and which were likely substituted, providing the reviewing institution with actionable detail.

12.4 Case D: National ID Checksum Failure

Document type: National identity card

Claimed origin: A country in Southeast Asia

Attack vector family: Tampering

The submitted national ID card had been altered to change the identity number. The forger had carefully modified several digits in the ID number while maintaining the visual appearance of the original document. The structural analysis found no obvious visual tampering artifacts, and the general formatting matched expected templates.

The country-specific validation module applied the national checksum algorithm to the displayed ID number and determined that the check digit was invalid for the given sequence of digits. This mathematical result established that the displayed ID number was not a valid issuance and ruled out image quality or OCR error as the explanation for the discrepancy.

Checkpoints triggered: U-32 (National ID Checksum), AV-10 (Name Substitution -- the number change correlated with a likely identity swap), U-20 (Registration/ID Number Format).

Outcome: Verdict FAKE. The checksum failure constituted a critical fail. The report included the mathematical calculation demonstrating the invalidity of the presented ID number.

12.5 Case Study Summary

13. Deep Verification Mode

13.1 Overview

Version 2.0 introduces Deep Verification, a premium forensic investigation tier that performs an expanded 13-stage analysis pipeline. Deep Verification is designed for high-stakes verification scenarios where standard analysis is insufficient, such as executive hiring, large financial transactions, immigration adjudication, and institutional accreditation reviews.

Deep Verification consumes 5 credits per document (compared to 1 credit for standard verification) and produces an executive-grade forensic report with significantly greater depth than the standard 12-section report.

13.2 Verification Stages

The Deep Verification pipeline includes all 8 stages of the standard pipeline plus 5 additional specialized stages:

| Stage | Name | Standard | Deep | Description |
|---|---|---|---|---|
| 1 | Document Classification | Yes | Yes | Identify document type, country, and issuing institution |
| 2 | Structural Analysis | Yes | Yes | Template conformance, layout, visual element review |
| 3 | Semantic Analysis | Yes | Yes | Content plausibility, date logic, grade validation |
| 4 | QR Code Analysis | Yes | Yes | QR detection, extraction, domain trust, portal match |
| 5 | External Verification | Yes | Yes | Portal results, checksum validation, MRZ parsing |
| 6 | Metadata Analysis | Yes | Yes | EXIF data, compression analysis, creation tool info |
| 7 | Attack Vector Assessment | Yes | Yes | Triggered AV defenses, severity, evidence |
| 8 | Scoring and Verdict | Yes | Yes | T1/T2 scoring, verdict determination |
| 9 | Deep Font & Typography Forensics | No | Yes | Typeface identification, glyph metrics, kerning analysis, baseline alignment |
| 10 | Seal & Watermark Deep Scan | No | Yes | Spectral signature analysis, embossing detection, holographic feature analysis |
| 11 | Institutional Deep Cross-Reference | No | Yes | Template matching, signatory verification, accreditation chain analysis |
| 12 | KYB -- Institution Due Diligence | No | Yes | Issuer credibility scoring, fraud rate assessment, regulatory standing |
| 13 | KYC -- Holder Background Screening | No | Yes | Sanctions check, PEP screening, adverse media, identity consistency |

13.3 Deep Confidence Dimensions

Standard verification produces a single-dimensional confidence score. Deep Verification expands this to 8 distinct confidence dimensions, each scored independently on a 0-100 scale:

| Dimension | Description | Weight |
|---|---|---|
| visual_integrity | Physical appearance, layout, print quality, and visual consistency | 15% |
| content_accuracy | Semantic correctness, date logic, grade validity, and content plausibility | 15% |
| institutional_match | Template conformance, signatory verification, accreditation chain | 15% |
| temporal_consistency | Date plausibility, timeline coherence, version anachronism detection | 10% |
| security_features | Seal authenticity, watermark analysis, holographic indicators, spectral signatures | 15% |
| holder_verification | Name consistency, identity coherence, cross-document agreement | 10% |
| kyb_credibility | Issuing institution credibility, accreditation status, fraud rate, regulatory standing | 10% |
| kyc_clearance | Holder sanctions screening, PEP status, adverse media, credibility risk | 10% |
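The eight weights above sum to 100%. Assuming the dimensions are combined as a plain weighted average (the paper lists the weights but does not state the aggregation formula), an overall score could be computed as in this sketch:

```python
# Percentage weights transcribed from the dimension table.
DIMENSION_WEIGHTS = {
    "visual_integrity": 15,
    "content_accuracy": 15,
    "institutional_match": 15,
    "temporal_consistency": 10,
    "security_features": 15,
    "holder_verification": 10,
    "kyb_credibility": 10,
    "kyc_clearance": 10,
}


def composite_confidence(scores: dict) -> float:
    """Weighted average of the eight 0-100 dimension scores.

    Assumption of this sketch: a linear weighted average with no
    critical-fail overrides.
    """
    missing = DIMENSION_WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing dimensions: {sorted(missing)}")
    return sum(DIMENSION_WEIGHTS[d] * scores[d] for d in DIMENSION_WEIGHTS) / 100
```

Under this assumption, a document scoring 100 on every dimension except `security_features` at 0 would receive a composite of 85, which shows how much a single 15% dimension can move the overall score.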

13.4 Deep Forensic Report Structure

The Deep Verification report expands significantly beyond the standard 12-section report to include the following sections:

- Executive Summary -- High-level verdict, confidence level, and critical findings with business-ready language

- Confidence Breakdown -- Detailed scoring across all 8 confidence dimensions with radar visualization

- Font Forensics -- Typeface identification, glyph metric analysis, kerning consistency, baseline alignment measurements

- Seal & Watermark Analysis -- Spectral signatures, embossing depth assessment, holographic feature mapping

- Cross-Reference Report -- Template matching results, signatory verification, accreditation chain validation

- KYB Report -- Institution Due Diligence -- Credibility score (0-100), fraud rate assessment, accreditation status, ranking tier (Top 100 Global, National Tier 1, Regional, etc.), regulatory standing and compliance history

- KYC Report -- Holder Background Screening -- Sanctions check (OFAC, EU, UN), PEP screening, adverse media analysis, identity consistency assessment, credibility risk profiling (Low/Medium/High)

- Risk Assessment -- Attack vector analysis, forgery technique identification, professional forensic opinion

- Document Lineage -- Provenance analysis and document history reconstruction

- Comparative Analysis -- Comparison against known authentic specimens from the same institution

- Methodology Notes -- Detailed description of analysis techniques applied and their confidence contributions

- Standard Forensic Sections -- All 12 sections from the standard report are included as appendix material

13.5 Premium User Interface

Deep Verification features a distinct visual experience to differentiate it from standard analysis:

- Purple-themed scanning animation with orbiting particle effects during the 13 analysis stages

- Premium "OPUS" badge and stage-specific labels ("DEEP SCAN", "KYB", "KYC") displayed during processing

- Gradient shimmer effects on the deep report header

- Purple X-ray scan beam with grid overlay and corner brackets during document analysis

- Collapsible forensic report sections with purple accent borders for easy navigation of the expanded report

14. KYB -- Know Your Business (Issuer Verification)

14.1 Overview

KYB (Know Your Business) is a Deep Verification stage that evaluates the credibility and legitimacy of the document-issuing institution. While standard verification confirms that an institution exists (U-35) and checks its accreditation status (U-36), KYB performs a comprehensive due diligence assessment that mirrors the institutional vetting conducted by financial regulators and accreditation bodies.

14.2 Assessment Dimensions

The KYB module evaluates issuing institutions across the following dimensions:

Credibility Score (0-100). A composite score reflecting the overall trustworthiness of the issuing institution, derived from accreditation status, ranking position, regulatory history, and fraud incident records.

Accreditation Database Cross-Reference. The system checks against authoritative accreditation databases including CHEA (Council for Higher Education Accreditation), QS World Rankings, Times Higher Education, national government registries, and regional accreditation bodies. Institutions are classified by their highest confirmed accreditation level.

Ranking Tier Classification. Institutions are classified into ranking tiers that provide context for the credibility score:

| Tier | Description | Example Indicators |
|---|---|---|
| Top 100 Global | Institutions consistently ranked in major global rankings | QS/THE Top 100, high research output |
| National Tier 1 | Leading institutions within their country | Top 10 nationally, strong accreditation |
| National Tier 2 | Established institutions with solid credentials | National accreditation, moderate ranking |
| Regional | Institutions primarily serving regional populations | Regional accreditation, limited rankings |
| Unranked/Unaccredited | Institutions without recognized accreditation | No verified accreditation, potential diploma mill indicators |

Fraud Rate Assessment. The system evaluates the historical rate of fraudulent documents claiming to originate from the institution, drawing on internal verification data and publicly available reports of credential fraud.

Regulatory Standing. Assessment of the institution's compliance with relevant regulatory frameworks, including any known compliance issues, sanctions, investigations, scandals, or enforcement actions. Government deregistrations and license revocations are flagged as critical findings.

14.3 KYB Output

The KYB section of the Deep Verification report includes:

- Institution credibility score with confidence interval

- Accreditation status summary with source citations

- Ranking tier with supporting evidence

- Fraud rate assessment (Low/Medium/High/Critical)

- Regulatory standing summary

- Known compliance issues or fraud incidents, if any

- Professional opinion on institutional credibility risk

15. KYC -- Know Your Customer (Holder Screening)

15.1 Overview

KYC (Know Your Customer) is a Deep Verification stage that performs background screening on the document holder -- the individual whose name appears on the document. This screening is modeled on financial sector KYC practices and provides an additional layer of verification beyond document-centric analysis.

15.2 Screening Components

Name Consistency Verification. The KYC module extends the standard name consistency check (U-18) by analyzing name variations across all document fields, including printed name, signature block, MRZ data, QR-encoded data, and any secondary name fields. Discrepancies that fall within expected transliteration variation are distinguished from those that suggest identity manipulation.

Sanctions and Watchlist Screening. The holder's name is screened against major sanctions lists:

| List | Jurisdiction | Coverage |
|---|---|---|
| OFAC SDN | United States | Specially Designated Nationals and Blocked Persons |
| EU Consolidated | European Union | Persons subject to EU restrictive measures |
| UN Security Council | International | Individuals subject to UN sanctions |

Politically Exposed Person (PEP) Screening. The system checks whether the document holder matches profiles of politically exposed persons, defined as individuals who hold or have recently held prominent public functions. PEP status does not constitute a negative finding but is flagged as a risk factor requiring enhanced due diligence.

Adverse Media Screening. Analysis of publicly available information for negative news coverage, legal proceedings, regulatory actions, or other adverse information associated with the holder's name and identifying details.

Identity Consistency Analysis. Comprehensive cross-validation of all identity-related data points across the document and any associated documents in the applicant folder, including age plausibility, biographical timeline coherence, and geographic consistency.

15.3 Credibility Risk Profiling

The KYC module produces a credibility risk classification for the document holder:

| Risk Level | Criteria | Recommended Action |
|---|---|---|
| Low | No sanctions hits, no PEP status, no adverse media, consistent identity | Standard processing |
| Medium | Minor adverse media, PEP associate, or minor identity discrepancies | Enhanced review recommended |
| High | Sanctions match, PEP principal, significant adverse media, or identity inconsistencies | Manual review required |
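The risk table can be read as a precedence rule: any High criterion wins, then any Medium criterion, otherwise Low. The sketch below encodes that reading; the `Screening` record and its field values are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class Screening:
    """Hypothetical summary of KYC screening results for one holder."""
    sanctions_match: bool = False
    pep: str = "none"              # "none", "associate", or "principal"
    adverse_media: str = "none"    # "none", "minor", or "significant"
    identity: str = "consistent"   # "consistent", "minor", or "inconsistent"


def risk_level(s: Screening) -> str:
    """Classify credibility risk; the most severe matching criterion wins."""
    if (s.sanctions_match or s.pep == "principal"
            or s.adverse_media == "significant" or s.identity == "inconsistent"):
        return "High"
    if s.pep == "associate" or s.adverse_media == "minor" or s.identity == "minor":
        return "Medium"
    return "Low"
```

Evaluating the High criteria first ensures that a holder with both a minor and a severe finding is never downgraded to Medium.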

16. Credit-Based Pricing System

16.1 Credit Model

Version 2.0 introduces a credit-based pricing system that provides flexible access to both standard and deep verification capabilities. Credits serve as the universal unit of consumption across all verification tiers.

| Verification Type | Credit Cost | Stages | Report Type |
|---|---|---|---|
| Standard | 1 credit | 8 | 12-section PDF |
| Deep | 5 credits | 13 | Executive forensic report |

Credit consumption is tracked in the database via the credit_cost column on each verification record, with quota usage calculated as SUM(COALESCE(credit_cost, 1)) to maintain backward compatibility with pre-v2.0 records.
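The effect of the `COALESCE` default can be demonstrated with an in-memory SQLite database. The table name `verifications`, the `user_id` column, and the outer `COALESCE(..., 0)` for users with no records are assumptions of this sketch; only `credit_cost` and the inner expression come from the text above.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE verifications ("
    "id INTEGER PRIMARY KEY, user_id TEXT NOT NULL, credit_cost INTEGER)"
)
conn.executemany(
    "INSERT INTO verifications (user_id, credit_cost) VALUES (?, ?)",
    [
        ("u1", None),  # pre-v2.0 record: no credit_cost stored, counts as 1
        ("u1", 1),     # standard verification
        ("u1", 5),     # deep verification
        ("u2", 5),
    ],
)


def quota_used(connection: sqlite3.Connection, user_id: str) -> int:
    """Total credits consumed by a user, treating NULL credit_cost as 1."""
    row = connection.execute(
        "SELECT COALESCE(SUM(COALESCE(credit_cost, 1)), 0) "
        "FROM verifications WHERE user_id = ?",
        (user_id,),
    ).fetchone()
    return row[0]
```

Here `quota_used(conn, "u1")` returns 7: the legacy record counts as one credit alongside one standard and one deep verification.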

16.2 Pricing Tiers

Subscription Plans:

| Plan | Monthly Price | Credits/Month | Per-Credit Cost | Target User |
|---|---|---|---|---|
| Free | $0 | 5 | N/A | Individual evaluation |
| Personal | $9 | 15 | $0.60 | Freelance recruiters, small offices |
| Pro | $29 | 200 | $0.145 | HR departments, admissions offices |
| Business | $99 | 1,000 | $0.099 | Large institutions, agencies |

Marketplace Credit Packs (one-time purchase):

| Pack | Price | Credits | Per-Credit Cost |
|---|---|---|---|
| Single | $0.50 | 1 | $0.50 |
| 10-Pack | $4.00 | 10 | $0.40 |
| 25-Pack | $7.50 | 25 | $0.30 |

16.3 Unit Economics

The platform achieves healthy unit economics through tiered model routing, aggressive prompt caching, and amortization of portal-verification overhead across the verification mix. Specific per-document COGS, model selection, and gross margin figures are treated as commercially sensitive and are not disclosed in this public white paper.

17. Limitations and Future Work

17.1 Current Limitations

The Turing Verify platform, while comprehensive in its current implementation, operates within several known constraints:

Physical document analysis. The system processes document images, not physical documents. Security features that require physical inspection -- such as UV-reactive inks, tactile embossing, or microprinting below image resolution -- cannot be evaluated. Documents that pass automated verification may still warrant physical examination in high-stakes scenarios.

Template coverage. The 18 institution templates cover frequently encountered issuers but represent a small fraction of the global population of credential-issuing institutions. Documents from untemplated institutions receive general structural analysis without the enhanced detection sensitivity that template matching provides.

Portal availability. The 56 integrated portals provide verification coverage for many common document sources, but the majority of credential-issuing institutions worldwide do not operate public verification portals. For documents without portal verification, the system relies entirely on forensic analysis without external data corroboration.

Language limitations. While the platform supports 5 interface languages, the forensic analysis is conducted in the language of the underlying AI models. Documents in less common languages may receive less precise semantic analysis than documents in widely spoken languages.

Adversarial evolution. As with any security system, the effectiveness of specific checkpoints may degrade as forgers develop techniques to evade them. The calibration feedback loop mitigates this, but the system's detection capabilities at any point in time reflect its current training state.

AI model dependency. The system's forensic capabilities are fundamentally dependent on the capabilities of the underlying AI models. Changes in model behavior across versions, API rate limits, or service disruptions directly impact the system's operation.

17.2 Expansion Roadmap

17.3 Research Directions

Several areas of ongoing research may inform future system capabilities:

Dedicated document analysis models. While general-purpose multi-modal models provide strong forensic analysis capabilities, models fine-tuned specifically for document verification may achieve superior performance on domain-specific tasks such as seal authentication and font analysis.

Physical security feature inference. Research into inferring the presence of physical security features (embossing, UV ink, microprinting) from high-resolution document scans may eventually enable partial physical feature analysis from digital images.

Generative AI watermarking. As generative AI models increasingly embed invisible watermarks in their outputs, the ability to detect these watermarks in document images may provide a direct signal for AI-generated forgeries.

Federated verification networks. Collaboration between verification platforms could enable cross-platform intelligence sharing about emerging forgery patterns without compromising individual document privacy.

Continuous model evaluation. Systematic evaluation frameworks that track model performance on verification tasks across model versions will be essential for maintaining confidence as underlying AI capabilities evolve.

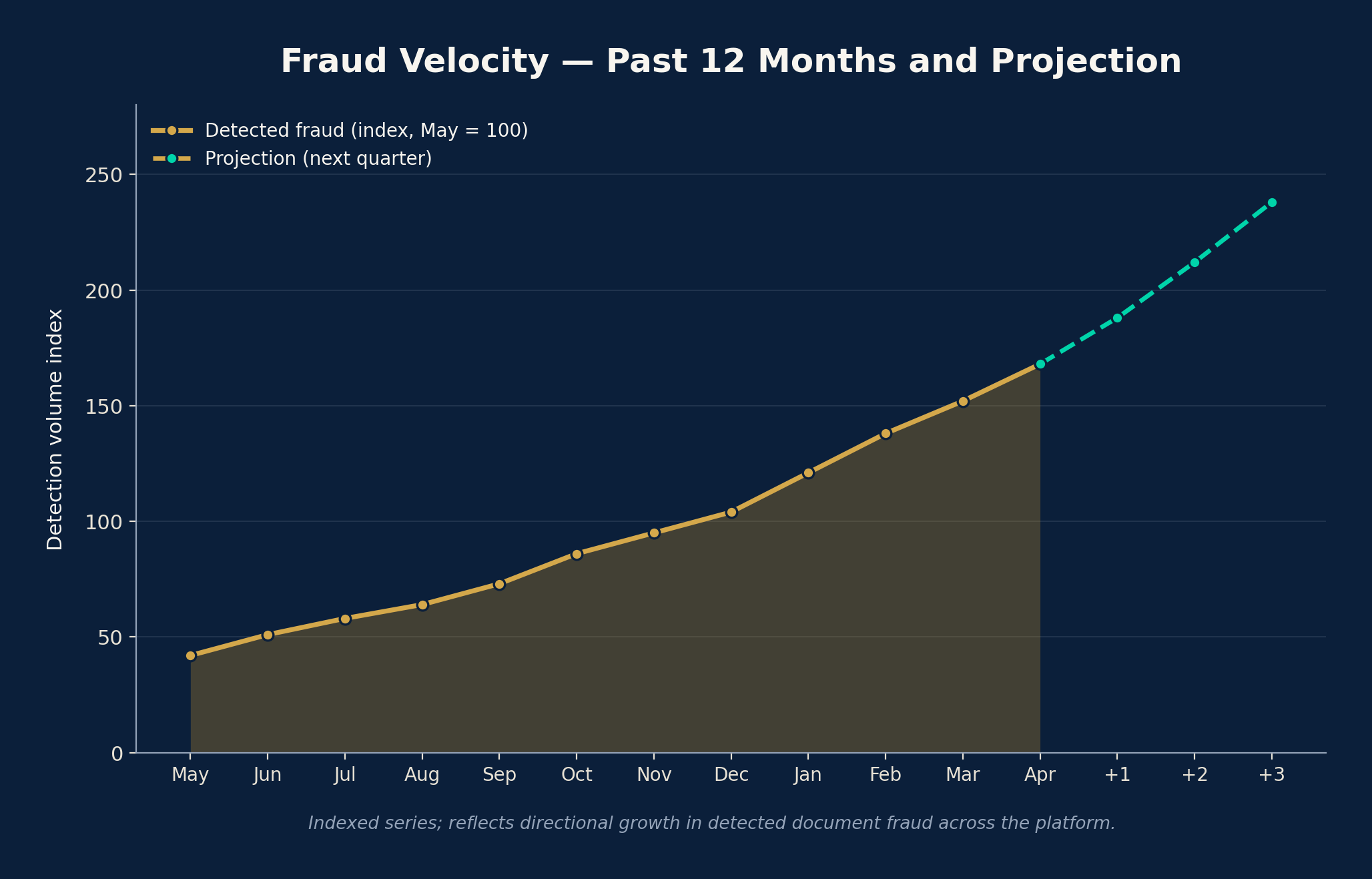

18. Wall of Forgeries: Public Fraud Telemetry

18.1 Mission

The Wall of Forgeries is a public, real-time dashboard that aggregates anonymized fraud telemetry from every verification processed by Turing Verify. It exists to translate private detection events into a shared public good: a continuously updated picture of how, where, and against which institutions document fraud is being attempted in 2026.

The dashboard is intentionally readable by non-technical audiences -- compliance officers, journalists, accreditation bodies, and the general public -- while still surfacing the underlying signal density that practitioners need.

18.2 Data Surfaces

The Wall is powered by the live /wall/dashboard API and renders six coordinated views:

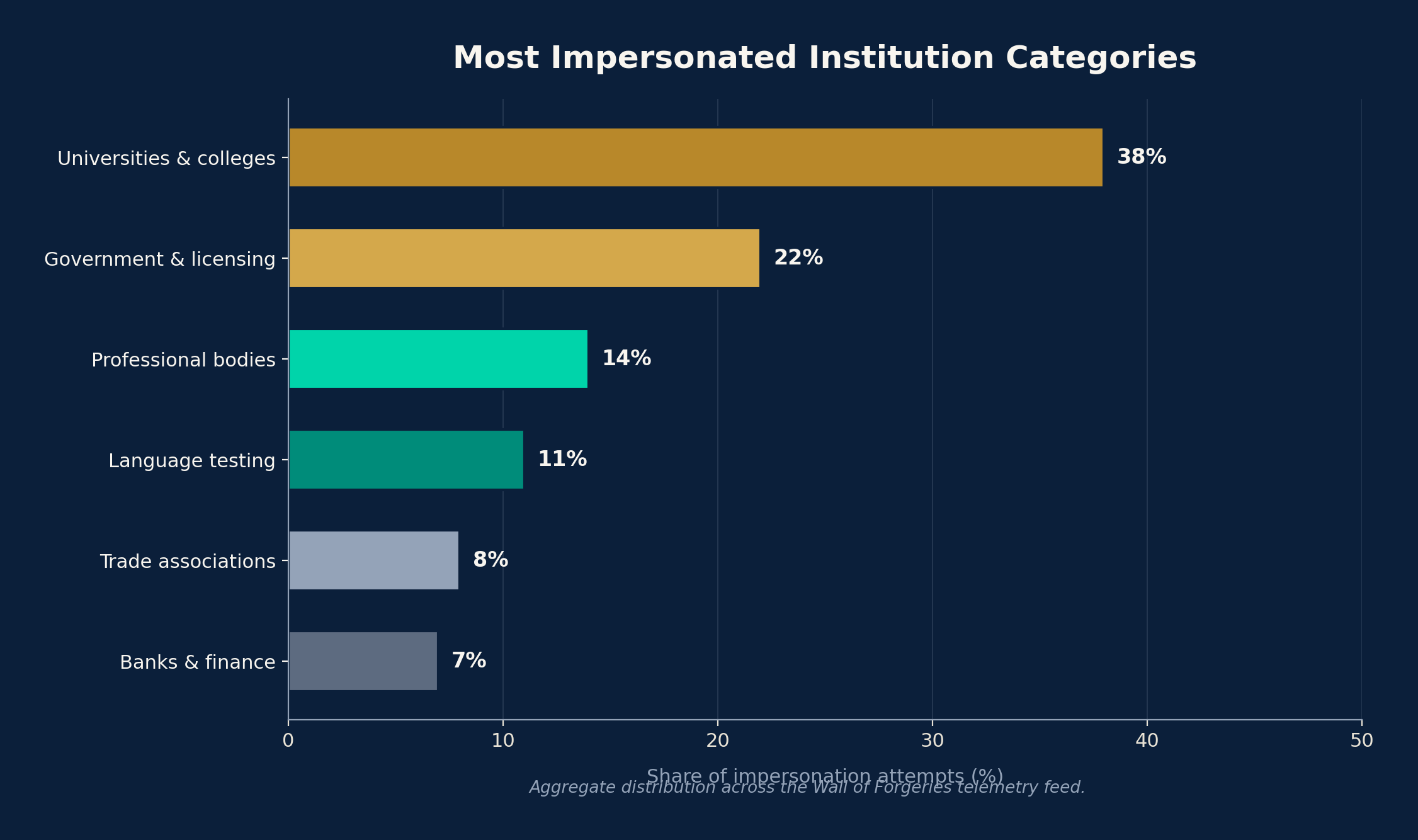

- Impersonated Institutions Leaderboard -- the institutions that fraudsters most frequently impersonate in forged documents, with k-anonymity protection (minimum cohort size of 3) to prevent re-identification.

- Forgery Techniques Breakdown -- distribution across the 36 attack vectors, surfacing which families (Fabrication, Tampering, Identity Fraud, Institutional Fraud, Digital Forgery, Evasion) are trending.

- Geographic Heatmap -- countries of claimed origin, normalized by submission volume.

- Document Type Distribution -- diplomas, transcripts, IDs, professional certifications, language tests.

- Fraud Velocity Timeline -- a rolling 12-month series of detected forgeries with a forward projection.

- Trusted Institutions Table -- institutions whose documents have shown the highest authenticity rates, providing positive signal alongside the negative.

18.3 Privacy and K-Anonymity

Wall of Forgeries telemetry is engineered for privacy by construction. No document content, no holder names, and no uploader identities are ever exposed. Every cohort exposed in the public API is gated behind a k-anonymity threshold so that small populations cannot be re-identified through differential queries. The endpoint is cached, rate-limited, and serves only aggregate counts and percentages.
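The k-anonymity gate described above can be illustrated with a minimal sketch. It assumes each detection event carries only an institution name, and it uses the minimum cohort size of 3 stated for the leaderboard. The `leaderboard` function and the event shape are hypothetical and are not the actual /wall/dashboard implementation.

```python
from collections import Counter

K_MIN = 3  # minimum cohort size stated for the leaderboard


def leaderboard(events, k=K_MIN):
    """Count detected forgeries per institution and suppress small cohorts.

    `events` is a hypothetical list of dicts with an "institution" key.
    Cohorts with fewer than k events are dropped before publication.
    """
    counts = Counter(event["institution"] for event in events)
    return [
        {"institution": name, "count": n}
        for name, n in counts.most_common()
        if n >= k
    ]


events = (
    [{"institution": "Univ A"}] * 5
    + [{"institution": "Univ B"}] * 3
    + [{"institution": "Univ C"}] * 2  # below the threshold, suppressed
)
print(leaderboard(events))
```

Only aggregate counts leave the function. A production endpoint would also need to consider whether published totals let a reader infer the size of a suppressed cohort.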

19. Compliance: GDPR, EU AI Act, and DSA

19.1 Regulatory Posture

Turing Verify operates under a compliance framework calibrated for the European regulatory environment of 2026, in particular the General Data Protection Regulation (GDPR), the EU AI Act, and Article 16 of the Digital Services Act (DSA). The platform's defaults are designed so that organizations operating in regulated sectors (education, finance, immigration, employment) can deploy it without bespoke legal review.

19.2 GDPR Controls

- 72-hour document retention. Uploaded documents and their derived images are purged automatically 72 hours after verification completes. Forensic reports are retained on a separate, longer schedule and can be deleted on request.

- Data subject rights endpoints. The API exposes structured endpoints for access, rectification, erasure, and portability, returning machine-readable JSON suitable for downstream subject access request (SAR) workflows.

- Lawful basis routing. Each verification is tagged with its lawful basis (legitimate interest, contract, consent) at submission time, allowing downstream auditors to filter records by basis.

- Data minimization. Only the fields required to produce the forensic verdict are retained beyond the 72-hour window; original images and intermediate AI traces are purged.
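As a rough illustration of the 72-hour retention rule, the sketch below partitions documents into those still inside the window and those due for purging. The record shape (`id`, `completed_at`) and the `purge_expired` helper are assumptions made for this example. The platform's actual storage layer and purge job are not described in this paper.

```python
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(hours=72)  # retention window stated in the GDPR controls


def purge_expired(documents, now=None):
    """Return (kept_ids, purged_ids) based on time since verification completed.

    `documents` is a hypothetical list of dicts with "id" and a timezone-aware
    "completed_at" datetime.
    """
    now = now or datetime.now(timezone.utc)
    kept, purged = [], []
    for doc in documents:
        expired = now - doc["completed_at"] >= RETENTION
        (purged if expired else kept).append(doc["id"])
    return kept, purged


now = datetime(2026, 4, 3, 12, 0, tzinfo=timezone.utc)
docs = [
    {"id": "doc-a", "completed_at": now - timedelta(hours=73)},
    {"id": "doc-b", "completed_at": now - timedelta(hours=10)},
]
print(purge_expired(docs, now=now))
```

Measuring the window from verification completion rather than upload follows the wording of the retention control above.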

19.3 EU AI Act Alignment

Turing Verify is classified internally as a high-risk AI system under the EU AI Act because its outputs influence access to education, employment, and financial services. The platform addresses the corresponding obligations through:

- A documented risk management process and model card per AI provider used.

- Transparent disclosure that decisions are AI-assisted, with a human-in-the-loop pathway for any document scored NEEDS_REVIEW.

- Logging of every automated decision with the model version, prompt revision, and scoring weights in effect at the time.

- A published methodology (this whitepaper) and open compliance contact for affected individuals.
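The decision-logging obligation listed above could be represented by a record like the following sketch. The field names, the `DecisionLogEntry` class, and the example values are hypothetical. Only the three verdicts and the logged attributes (model version, prompt revision, scoring weights) come from this paper.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone

VERDICTS = {"PASS", "NEEDS_REVIEW", "FAKE"}  # the three verdicts the platform emits


@dataclass(frozen=True)
class DecisionLogEntry:
    """Hypothetical immutable audit record for one automated decision."""

    verification_id: str
    verdict: str
    model_version: str
    prompt_revision: str
    scoring_weights: dict
    logged_at: str

    def __post_init__(self):
        if self.verdict not in VERDICTS:
            raise ValueError(f"unknown verdict: {self.verdict}")

    @property
    def needs_human_review(self):
        # NEEDS_REVIEW documents follow the human-in-the-loop pathway.
        return self.verdict == "NEEDS_REVIEW"

    def to_json(self):
        return json.dumps(asdict(self), sort_keys=True)


entry = DecisionLogEntry(
    verification_id="ver-0001",          # placeholder identifier
    verdict="NEEDS_REVIEW",
    model_version="model-x.y",           # placeholder, not a real model name
    prompt_revision="rev-12",            # placeholder revision tag
    scoring_weights={"structural": 0.4, "semantic": 0.6},  # illustrative weights
    logged_at=datetime.now(timezone.utc).isoformat(),
)
print(entry.to_json())
```

Freezing the record and serializing with sorted keys keeps each log line stable, which makes later audits and diffs easier.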

19.4 DSA Article 16 Notice-and-Action

The platform implements a public notice-and-action wall that allows any third party -- including impersonated institutions and document holders -- to file a takedown or correction request. Requests are routed into a moderation queue with audit-grade logging, and acknowledgements are issued within statutory deadlines.

20. Adversarial Training Loop

20.1 Synthetic Fake Generator

Turing Verify maintains an internal adversarial training loop in which a Faker Agent continuously synthesizes fake documents at four difficulty tiers (T1 cosmetic, T2 template-aware, T3 institution-aware, T4 hostile multimodal). These synthetic forgeries are processed by the live Verifier in shadow mode, and any document the Verifier fails to detect is automatically routed back to a calibration queue.

20.2 Feedback Loop

Each missed detection produces three artifacts: (i) a new ground-truth case appended to the calibration set, (ii) a candidate prompt or scoring weight adjustment proposed by the calibration agent, and (iii) a regression test against all prior calibration cases. Adjustments only ship when they pass regression. This is the same loop that powers the adversarial-battle skill used during development and is the primary mechanism by which detection accuracy is improved between releases.
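The regression gate in this loop can be sketched as follows. The example assumes a toy detector that flags a document when a single `anomaly_score` crosses a threshold. The real Verifier, calibration agent, and case format are not specified here, so every name in the block is hypothetical.

```python
def catches(detector, case):
    """True if the detector's verdict matches the ground-truth label."""
    return detector(case["features"]) == case["is_forgery"]


def evaluate_adjustment(candidate, missed_case, prior_cases):
    """Decide whether a candidate adjustment may ship.

    The candidate must detect the missed case and must not regress on any
    prior calibration case. The missed case is appended to the set either way.
    """
    fixes_miss = catches(candidate, missed_case)
    regressions = [c["id"] for c in prior_cases if not catches(candidate, c)]
    return {
        "ship": fixes_miss and not regressions,
        "fixes_miss": fixes_miss,
        "regressions": regressions,
        "calibration_set": prior_cases + [missed_case],
    }


def threshold_detector(threshold):
    # Toy stand-in for the Verifier: flag when the score reaches the threshold.
    return lambda features: features["anomaly_score"] >= threshold


prior_cases = [
    {"id": "gt-01", "features": {"anomaly_score": 0.2}, "is_forgery": False},
    {"id": "gt-02", "features": {"anomaly_score": 0.9}, "is_forgery": True},
]
missed = {"id": "gt-03", "features": {"anomaly_score": 0.6}, "is_forgery": True}

print(evaluate_adjustment(threshold_detector(0.5), missed, prior_cases)["ship"])
print(evaluate_adjustment(threshold_detector(0.1), missed, prior_cases)["regressions"])
```

In this toy setup, lowering the threshold to 0.5 catches the missed forgery without regressions, while lowering it to 0.1 starts flagging a genuine document and is therefore blocked.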

21. Internationalization and Content Library

21.1 Five Languages

The verification interface, the Wall of Forgeries, and the whitepaper itself are localized into five languages: English (en), Traditional Chinese (zh-TW), Japanese (ja), French (fr), and Spanish (es). Each locale has its own canonical URL and is exposed through hreflang and alternates metadata so that international searchers land on the appropriate variant.
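A minimal sketch of how hreflang alternates for the five locales might be generated is shown below. The base URL is a placeholder, and the `/{locale}{path}` URL scheme and the `x-default` fallback to English are assumptions made for illustration. The paper does not state the platform's actual URL layout.

```python
from html import escape

LOCALES = ["en", "zh-TW", "ja", "fr", "es"]  # the five locales listed above
BASE_URL = "https://example.com"  # placeholder domain, not the real site


def alternate_links(path, default="en"):
    """Build <link rel="alternate"> tags for every locale plus x-default."""
    links = []
    for locale in LOCALES:
        href = escape(f"{BASE_URL}/{locale}{path}", quote=True)
        links.append(f'<link rel="alternate" hreflang="{locale}" href="{href}" />')
    default_href = escape(f"{BASE_URL}/{default}{path}", quote=True)
    links.append(
        f'<link rel="alternate" hreflang="x-default" href="{default_href}" />'
    )
    return links


for tag in alternate_links("/whitepaper"):
    print(tag)
```

Each locale page would emit the same full set of tags, so that every variant references all the others as well as itself.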

21.2 Long-Form Content Guides

Alongside the whitepaper, Turing Verify publishes a library of practitioner-oriented guides that translate forensic techniques into operational playbooks:

- How to Spot a Fake Diploma -- a visual primer on the most common diploma forgery patterns of 2026.

- Diploma Mills 2026 -- an updated index of unaccredited institutions and the markers that distinguish them from legitimate accreditation peers.

- Document Fraud Statistics 2026 -- the year's headline numbers, drawn from the Wall of Forgeries dataset.

- ATS Screening Playbook -- guidance for HR teams integrating verification into applicant tracking systems.

- Verifying a Foreign Transcript -- a step-by-step workflow for academic admissions reviewers.

- Insurance KYC Fraud Patterns -- techniques observed in insurance underwriting submissions.

Every guide carries structured Article, Dataset, and BreadcrumbList JSON-LD metadata, and the full library is mirrored as an RSS feed for syndication.
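For illustration, the Article and BreadcrumbList metadata for one guide might be assembled as in the sketch below. The `guide_jsonld` helper, the URLs, and the breadcrumb trail are hypothetical, and the Dataset object is omitted for brevity. Only schema.org vocabulary terms are used.

```python
import json


def guide_jsonld(headline, url, language, crumbs):
    """Build Article and BreadcrumbList JSON-LD objects for a guide page.

    `crumbs` is an ordered list of (name, url) pairs from the site root
    down to the guide itself.
    """
    article = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "inLanguage": language,
    }
    breadcrumbs = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": item}
            for i, (name, item) in enumerate(crumbs, start=1)
        ],
    }
    return [article, breadcrumbs]


metadata = guide_jsonld(
    headline="How to Spot a Fake Diploma",
    url="https://example.com/en/guides/fake-diploma",  # placeholder URL
    language="en",
    crumbs=[
        ("Guides", "https://example.com/en/guides"),
        ("How to Spot a Fake Diploma", "https://example.com/en/guides/fake-diploma"),
    ],
)
print(json.dumps(metadata, indent=2))
```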

22. Platform Engineering

22.1 Continuous Integration

Every change to the platform is validated by a CI pipeline that runs the backend pytest suite, the frontend vitest unit tests, and a production smoke suite that exercises the live verification, deep verification, and Wall of Forgeries endpoints against a deployed environment. Releases to main are blocked unless all three suites are green.

22.2 Architecture at a Glance

23. Conclusion

Document fraud represents a systemic threat to the institutions and processes that depend on credential verification. The convergence of generative AI capabilities with readily available document templates has expanded the accessibility and sophistication of forgery techniques, creating a widening gap between attack capabilities and traditional detection methods.

Turing Verify addresses this challenge through a multi-model forensic architecture that combines rapid pre-screening, systematic checkpoint evaluation, external portal verification, and country-specific validation into a unified verification pipeline. The v2.0 baseline of 81 checkpoints -- comprising 45 forensic rules and 36 attack vector defenses -- provides coverage across structural, semantic, external, and metadata dimensions of document authenticity.

Version 2.0 significantly expands the platform's capabilities with the introduction of Deep Verification mode. The 13-stage deep analysis pipeline extends standard forensic evaluation with specialized font forensics, spectral seal and watermark analysis, institutional deep cross-referencing, KYB issuer due diligence, and KYC holder background screening. These additions transform Turing Verify from a document-centric verification tool into a comprehensive due diligence platform that evaluates the document, its issuing institution, and its holder as an integrated whole.

The AI vs Human Inspector benchmark framework, evaluated across a 200-document dataset with four difficulty tiers, demonstrates that the platform processes documents orders of magnitude faster than human inspectors at materially lower cost per fraud caught, while achieving accuracy comparable to expert human inspectors. Deep Verification mode approaches or exceeds expert-level F1 scores across all difficulty tiers.

The system's integration with 56 verification portals across multiple countries enables external corroboration that significantly strengthens verification confidence. Country-specific modules for national ID checksum validation (6 countries), business registration format validation (9 countries), and MRZ parsing (the three ICAO 9303 formats: TD1, TD2, and TD3) provide jurisdiction-appropriate checks that generalized approaches cannot match.

The two-tier scoring model and three-verdict output (PASS, NEEDS_REVIEW, FAKE) are designed to support institutional decision-making rather than replace it. Standard verifications produce 12-section forensic PDF reports, while Deep Verifications generate executive-grade forensic reports with confidence breakdowns across 8 dimensions. The NEEDS_REVIEW category explicitly bridges automated and manual verification, identifying documents that warrant expert attention while providing the automated analysis as context for that review.

The credit-based pricing system introduced in v2.0 provides flexible access to both standard (1 credit) and deep (5 credit) verification capabilities, with subscription plans ranging from free individual use to enterprise-scale deployment. Prompt caching and tiered model routing keep marginal compute predictable across the verification mix.
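The credit arithmetic is simple enough to show directly. The sketch below uses the two stated prices (1 credit per standard verification, 5 per deep verification). The `credits_required` helper and the example workload are illustrative only.

```python
CREDIT_COST = {"standard": 1, "deep": 5}  # per-verification prices stated above


def credits_required(mix):
    """Total credits for a workload given as {mode: number_of_verifications}."""
    return sum(CREDIT_COST[mode] * count for mode, count in mix.items())


# Illustrative workload: 40 standard checks plus 4 escalated to deep mode.
print(credits_required({"standard": 40, "deep": 4}))  # 40*1 + 4*5 = 60
```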

Calibration against 15 ground-truth cases with a continuous feedback loop ensures that the system's detection capabilities track the evolving forgery landscape. The architecture's modularity -- with separable client, API, processing, AI, integration, and persistence layers -- enables independent evolution of each component as requirements, capabilities, and threats change.

The platform does not claim to eliminate document fraud or replace all forms of verification. Physical document examination, direct source verification from issuing institutions, and blockchain-anchored credentials each retain important roles in a comprehensive verification ecosystem. Turing Verify's contribution is to the high-volume, time-sensitive middle ground where institutions need systematic forensic analysis at scale, delivered consistently, documented thoroughly, and integrated with the growing network of institutional verification portals.

As the tools available to forgers continue to advance, the tools available to verifiers must advance correspondingly. Multi-model AI architectures, integrated with external verification infrastructure, calibrated against known ground truth, and augmented with KYB/KYC due diligence capabilities, represent the current frontier of scalable document verification technology.

Appendix A: Forensic Checkpoint Reference

Complete catalog of all 45 forensic rules (U-01 to U-45), organized by category.

A.1 Structural Rules

| Rule ID | Rule Name | Category | Weight | Critical? |
|---|---|---|---|---|
| U-01 | Template Conformance | Structural | High | No |
| U-02 | Font Consistency | Structural | Medium | No |
| U-03 | Alignment Integrity | Structural | Medium | No |
| U-04 | Seal/Stamp Authenticity | Structural | High | No |
| U-05 | Signature Presence | Structural | Medium | No |
| U-06 | Paper/Background Uniformity | Structural | Medium | No |
| U-07 | Print Quality Assessment | Structural | Low | No |
| U-08 | Border and Frame Integrity | Structural | Low | No |
| U-09 | Logo Fidelity | Structural | Medium | No |
| U-10 | Watermark Analysis | Structural | High | No |
| U-11 | Hologram/Security Feature Indicators | Structural | Medium | No |
| U-12 | Image Resolution Consistency | Structural | Medium | No |

A.2 Semantic Rules

| Rule ID | Rule Name | Category | Weight | Critical? |
|---|---|---|---|---|
| U-13 | Date Plausibility | Semantic | High | No |
| U-14 | Grade/Score Validity | Semantic | High | No |
| U-15 | Course Load Plausibility | Semantic | Medium | No |
| U-16 | Institutional Language | Semantic | Medium | No |
| U-17 | Credential Designation | Semantic | Medium | No |
| U-18 | Name Consistency | Semantic | High | No |
| U-19 | Address/Location Validity | Semantic | Medium | No |
| U-20 | Registration/ID Number Format | Semantic | High | No |
| U-21 | Grading System Consistency | Semantic | Medium | No |
| U-22 | Credit Hour Validation | Semantic | Low | No |
| U-23 | Cumulative Calculation Accuracy | Semantic | High | No |
| U-24 | Signatory Title Plausibility | Semantic | Low | No |
| U-25 | Language and Grammar | Semantic | Low | No |
| U-26 | Content Completeness | Semantic | Medium | No |

A.3 External Verification Rules

| Rule ID | Rule Name | Category | Weight | Critical? |
|---|---|---|---|---|